OpenClaw Memory System: How AI Agents Remember Context

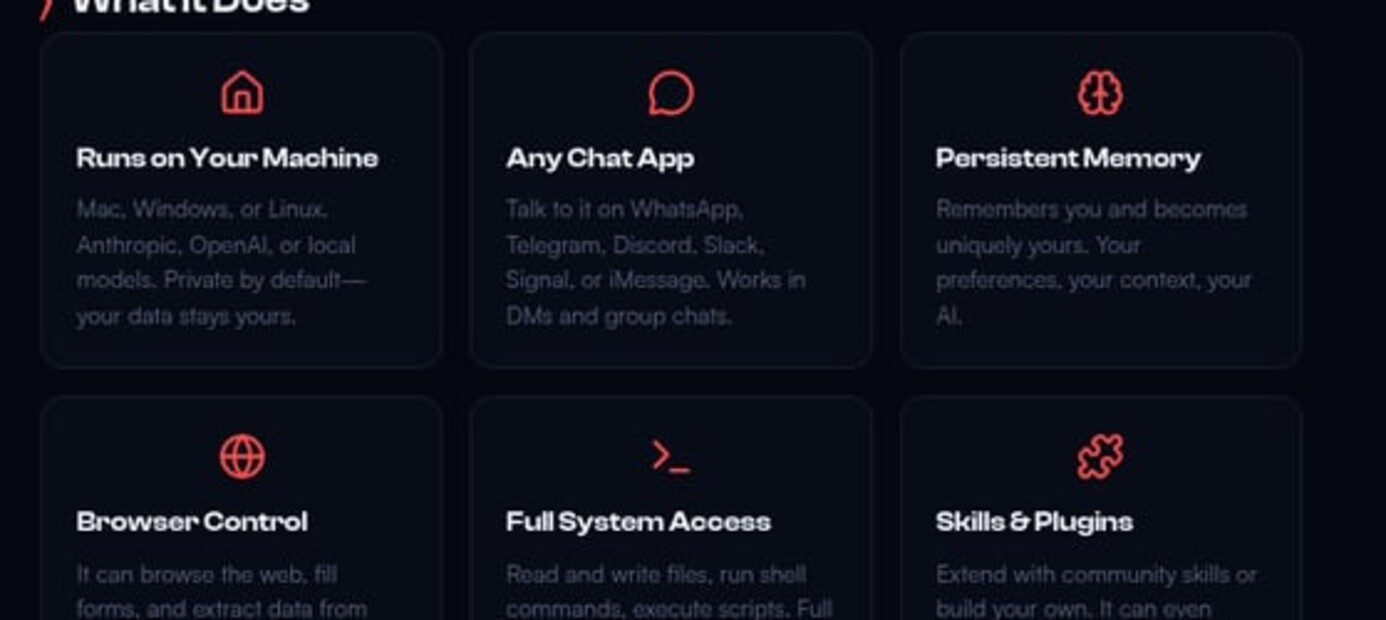

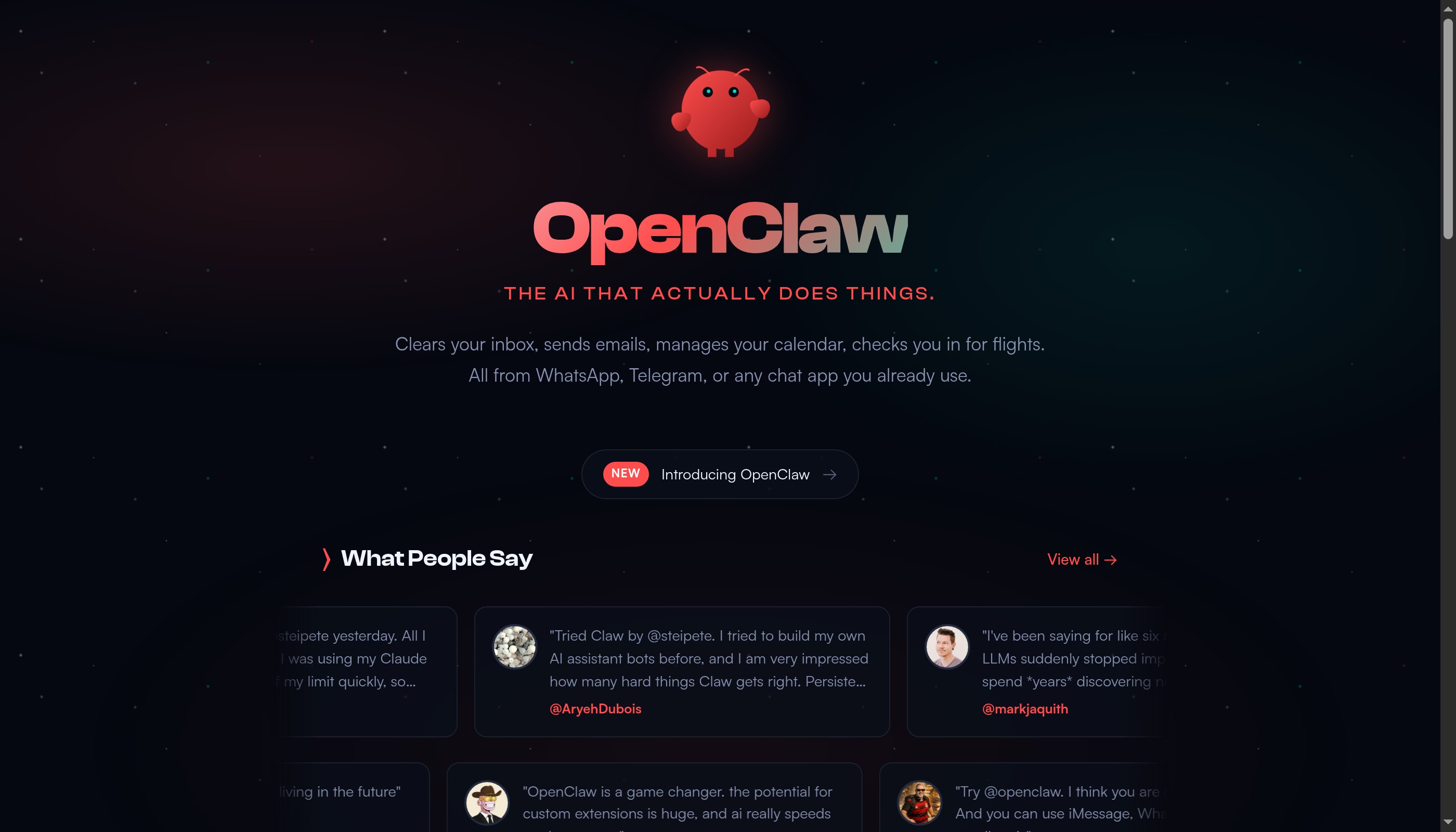

AI assistants face a fundamental challenge: language models are stateless. Every conversation starts fresh unless you implement persistent memory. OpenClaw solves this with a sophisticated file-based memory system that gives your AI assistant continuity, context awareness, and long-term knowledge retention.

Understanding how OpenClaw manages memory is crucial for building reliable AI agents. This guide explores the architecture, file structure, best practices, and advanced techniques for maintaining context across sessions while avoiding the dreaded context window overflow.

The Stateless AI Problem

Without memory, AI assistants forget everything between sessions


Language models like Claude have no inherent memory between API calls. Each request is independent—the model receives only the immediate prompt without awareness of previous conversations or learned preferences.

Traditional chatbots solve this by sending the entire conversation history with every request. This works for short exchanges but breaks down quickly: a week of daily interactions can exceed context limits, causing errors or forcing history truncation.

OpenClaw implements persistent, file-based memory that grows indefinitely while keeping active context manageable. Your AI assistant can reference months of history without loading everything into the context window simultaneously.

How OpenClaw Memory Works

Multi-layer memory system balancing persistence and performance


OpenClaw uses a three-tier memory architecture: session context (immediate), daily memory (recent), and long-term memory (curated). Each tier serves different purposes and loads at different times.

Session context exists only during active conversation. It includes the current chat history, tool call results, and temporary working memory. This clears when the session ends.

Daily memory files (memory/YYYY-MM-DD.md) capture events, decisions, and learnings from each day. The AI assistant loads today's and yesterday's files automatically, providing recent context without overwhelming the window.

Long-term memory (MEMORY.md) stores curated knowledge—important facts, preferences, lessons learned, and significant events. This file is loaded only in main sessions (direct user interaction), not in shared contexts or subagents.
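The loading rules above can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's actual loader: the function name `files_to_load` and the `main_session` flag are hypothetical, and only the file names come from this guide.

```python
from datetime import date, timedelta
from pathlib import Path


def files_to_load(workspace: Path, today: date, main_session: bool) -> list[Path]:
    """Return the memory files a session would read, following the tiers above.

    Hypothetical helper: it mirrors the rules in this guide, not OpenClaw internals.
    """
    yesterday = today - timedelta(days=1)
    candidates = [
        workspace / "memory" / f"{today.isoformat()}.md",
        workspace / "memory" / f"{yesterday.isoformat()}.md",
    ]
    if main_session:
        # Long-term memory is private, so it is only read in main sessions.
        candidates.append(workspace / "MEMORY.md")
    # Skip files that do not exist yet (for example, a day with no log).
    return [path for path in candidates if path.exists()]
```

Session context is deliberately absent from the sketch: it lives only in the active conversation and is never read from disk.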

Step 1: Understanding File Structure

Organized memory files enable efficient context management


The OpenClaw workspace contains several memory-related files, each with a specific purpose:

    ~/.openclaw/workspace/
    ├── AGENTS.md          # Your instructions and rules
    ├── SOUL.md            # Personality and identity
    ├── USER.md            # Information about your human
    ├── MEMORY.md          # Long-term curated memories
    ├── TOOLS.md           # Local tool configurations
    ├── memory/
    │   ├── 2026-02-25.md  # Today's raw activity log
    │   ├── 2026-02-24.md  # Yesterday's log
    │   └── ...            # Historical daily logs
    └── HEARTBEAT.md       # Periodic task checklist

Each file serves distinct needs. AGENTS.md provides operational rules, SOUL.md defines personality, and memory files track experiences. Never merge these—separation maintains clarity.

The memory/ directory grows continuously but individual files stay manageable. Daily logs average 2-10KB each, allowing years of history without storage concerns.

Step 2: Writing Effective Daily Logs

Daily logs capture raw events with enough detail for future recall


Daily memory files should be chronological, factual, and detailed enough for future reference. Think of them as journal entries rather than summaries.

Good daily log entry:

## 2026-02-25 09:30 - Email Campaign Setup

Created automated email sequence for product launch. Used template from /templates/launch-email.md. Scheduled 3 emails over 5 days via Mailchimp API. Confirmed test send to staging list.

Key decision: Delayed launch by 2 days due to inventory confirmation needed.

Bad daily log entry:

Worked on emails. Sent some stuff.

Include context for decisions, outcomes of actions, and references to relevant files or resources. Future-you (or future AI sessions) should understand what happened and why.
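If you add entries from a script rather than by hand, a small helper can keep the format consistent. The sketch below reproduces the heading and "Key decision" layout of the good example above; the function name `append_daily_entry` and its parameters are illustrative assumptions, not part of OpenClaw.

```python
from datetime import datetime
from pathlib import Path


def append_daily_entry(workspace: Path, when: datetime, title: str,
                       body: str, decision: str = "") -> Path:
    """Append one timestamped entry to memory/YYYY-MM-DD.md and return the file path."""
    log_path = workspace / "memory" / f"{when:%Y-%m-%d}.md"
    log_path.parent.mkdir(parents=True, exist_ok=True)
    lines = [f"## {when:%Y-%m-%d %H:%M} - {title}", "", body.strip(), ""]
    if decision:
        lines += [f"Key decision: {decision.strip()}", ""]
    # Append mode keeps earlier entries, so the file stays chronological.
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write("\n".join(lines) + "\n")
    return log_path
```

Appending instead of rewriting means two sessions on the same day add to one file rather than replacing each other's notes.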

For memory best practices, see our memory files guide and context management tutorial.

Step 3: Curating Long-Term Memory

Regular memory curation transforms raw logs into lasting knowledge


MEMORY.md contains distilled wisdom—patterns, preferences, and important facts worth remembering long-term. Not everything from daily logs belongs here.

Review daily logs weekly or monthly and extract significant items:

- User preferences: "Prefers Sonnet over Opus for drafts"

- Learned patterns: "Monday mornings require extra context in reports"

- Important relationships: "Works closely with Sarah (sarah@company.com) on design"

- Recurring mistakes: "Always verify timezone when scheduling—user is UTC+1"

Remove outdated information aggressively. Long-term memory should stay under 10KB to load efficiently. Archive detailed history to daily logs rather than accumulating everything.

Treat MEMORY.md like a human's long-term memory: it stores concepts, patterns, and significant events, not exhaustive details of every action.
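One way to make the weekly review quicker is to pull candidate items out of recent logs first. The sketch below assumes your daily logs mark decisions with a "Key decision:" line, as in the example entry earlier in this guide; the function name `curation_candidates` is hypothetical, and deciding what actually belongs in MEMORY.md remains a judgment call.

```python
from datetime import date, timedelta
from pathlib import Path


def curation_candidates(workspace: Path, today: date, days: int = 7) -> list[str]:
    """Collect 'Key decision:' lines from the last `days` daily logs, newest first."""
    found = []
    for offset in range(days):
        day = today - timedelta(days=offset)
        log_path = workspace / "memory" / f"{day.isoformat()}.md"
        if not log_path.exists():
            continue  # no log was written that day
        for line in log_path.read_text(encoding="utf-8").splitlines():
            if line.startswith("Key decision:"):
                text = line.removeprefix("Key decision:").strip()
                found.append(f"{day.isoformat()}: {text}")
    return found
```

The output is a shortlist to review, not text to paste into long-term memory unedited.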

Step 4: Managing Context Window Limits

Proactive management prevents context overflow crashes


Claude's context window is large but finite. A session using around 50% of the window can continue safely, but at 75% or more you should act immediately to prevent overflow.
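As a rough sketch, the 50% and 75% marks can be turned into a small decision helper. The function name, the returned labels, and the middle "watch" band are assumptions made for illustration; how you obtain the token counts depends on your setup.

```python
def context_action(used_tokens: int, window_tokens: int) -> str:
    """Map context usage to an action using the 50% and 75% marks from this guide."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    usage = used_tokens / window_tokens
    if usage >= 0.75:
        return "summarize-and-clear"
    if usage > 0.50:
        # Assumed middle band: still safe, but worth keeping an eye on.
        return "watch"
    return "continue"
```

With an illustrative 200,000-token window, `context_action(150_000, 200_000)` returns `"summarize-and-clear"`.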

Monitor context usage through OpenClaw's status indicators. When approaching limits:

- Summarize current conversation to daily memory

- Clear session history with /clear or /compact

- Continue with fresh context, referencing saved summaries as needed

For complex multi-step tasks, use subagent delegation instead of keeping everything in one session. Each subagent gets isolated context, preventing overflow in the main session.

Prevention strategies:

- Use Haiku for simple tasks (smaller context footprint)

- Read large files in chunks rather than all at once

- Delegate browser work to subagents (screenshots consume massive context)

- Summarize and clear periodically during long sessions
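Reading a large file in chunks, as suggested in the list above, might look like the following. This is a generic sketch rather than an OpenClaw feature; the function name and the 4,000-character default are arbitrary illustrative choices.

```python
from pathlib import Path
from typing import Iterator


def read_in_chunks(path: Path, max_chars: int = 4000) -> Iterator[str]:
    """Yield a text file in pieces of at most max_chars, splitting on line boundaries."""
    buffer: list[str] = []
    size = 0
    with path.open(encoding="utf-8") as handle:
        for line in handle:
            # A single over-long line is split hard so no chunk exceeds the limit.
            while len(line) > max_chars:
                if buffer:
                    yield "".join(buffer)
                    buffer, size = [], 0
                yield line[:max_chars]
                line = line[max_chars:]
            if size + len(line) > max_chars and buffer:
                yield "".join(buffer)
                buffer, size = [], 0
            buffer.append(line)
            size += len(line)
    if buffer:
        yield "".join(buffer)
```

Because it is a generator, only one chunk is held at a time, so each piece can be summarized before the next one is read.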

Our context window management guide covers advanced techniques for context optimization.

Step 5: Implementing Memory Hygiene

Regular maintenance keeps the memory system efficient and useful


Daily: Write significant events, decisions, or learnings to today's memory file (memory/YYYY-MM-DD.md). This happens automatically for important interactions, but you can also add entries manually.

Weekly: Review the past week's daily logs and update MEMORY.md with patterns or insights worth remembering long-term. Archive anything that's no longer relevant.

Monthly: Audit AGENTS.md and SOUL.md for outdated rules. Remove instructions that no longer apply or caused confusion. Memory files guide behavior—keep them current.

As needed: Use heartbeat sessions for background memory maintenance. Your AI assistant can review files, organize notes, and update documentation during idle time without human intervention.

Set up automated memory review with a cron job:

openclaw cron add "0 20 * * 0" "Review this week's daily memory files, update MEMORY.md with significant insights, remove outdated information"
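The schedule string in that command uses the standard five-field cron layout (minute, hour, day of month, month, day of week), so "0 20 * * 0" means 20:00 every Sunday. The sketch below checks a timestamp against such an expression. It is a simplified matcher written for illustration, not how OpenClaw evaluates schedules, and it only understands "*" and single integers.

```python
from datetime import datetime


def cron_matches(expression: str, moment: datetime) -> bool:
    """Check a five-field cron expression against a datetime.

    Simplified: each field must be "*" or a single integer (no ranges, lists, or steps).
    """
    fields = expression.split()
    if len(fields) != 5:
        raise ValueError("expected five fields: minute hour day-of-month month day-of-week")
    # Cron counts Sunday as 0; Python's weekday() counts Monday as 0.
    cron_weekday = (moment.weekday() + 1) % 7
    actual = (moment.minute, moment.hour, moment.day, moment.month, cron_weekday)
    for field, value in zip(fields, actual):
        if field != "*" and int(field) != value:
            return False
    return True
```

For example, `cron_matches("0 20 * * 0", datetime(2026, 3, 1, 20, 0))` is true because 1 March 2026 falls on a Sunday.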

Advanced Memory Patterns

Advanced techniques for specialized memory needs


Project-specific memory: Create a separate memory file for each major project, for example memory/project-website-redesign.md. Reference these files from the main MEMORY.md with links rather than duplicating content.

Versioned memory: Track memory changes with git to review how understanding evolved over time. Commit memory files after significant updates to create recoverable checkpoints.
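A checkpoint commit can be scripted with ordinary git commands. The sketch below assumes the workspace is already a git repository and that MEMORY.md and memory/ both exist; the function names are hypothetical.

```python
import subprocess
from pathlib import Path


def checkpoint_commands(workspace: Path, message: str) -> list[list[str]]:
    """Build the git commands that stage and commit the memory files."""
    repo = str(workspace)
    return [
        ["git", "-C", repo, "add", "MEMORY.md", "memory"],
        ["git", "-C", repo, "commit", "-m", message],
    ]


def checkpoint(workspace: Path, message: str) -> None:
    """Run the commands in order.

    Raises subprocess.CalledProcessError if git fails, which includes the
    case where there is nothing new to commit.
    """
    for command in checkpoint_commands(workspace, message):
        subprocess.run(command, check=True)
```

Keeping the command list separate from the runner makes it easy to print the commands and inspect them before anything touches the repository.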

Contextual loading: Load memory selectively based on conversation context. Not every session needs full memory—subagents executing specific tasks need only relevant information.

Memory sharing: For team environments, separate private memory (MEMORY.md) from shared knowledge (TEAM-KNOWLEDGE.md). Private memory stays out of shared sessions for privacy.

Structured memory: Use YAML frontmatter in memory files for queryable metadata:

    ---
    category: client-preferences
    client: Acme Corp
    last-updated: 2026-02-25
    ---
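To show what "queryable" could mean in practice, here is a minimal sketch that separates the frontmatter from the body using only the standard library. It handles just the flat key: value pairs used in the example above; anything nested would need a real YAML parser. The function name `split_frontmatter` is an assumption for illustration.

```python
def split_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split a memory file into (metadata, body).

    Only flat `key: value` pairs are supported.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    metadata: dict[str, str] = {}
    for index, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            # Closing delimiter found: everything after it is the body.
            return metadata, "\n".join(lines[index + 1:])
        if ":" in line:
            key, value = line.split(":", 1)
            metadata[key.strip()] = value.strip()
    # No closing delimiter: treat the whole file as body.
    return {}, text
```

With the metadata in a dictionary, selecting every file where `category` equals `client-preferences` becomes a simple loop over the memory directory.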

Learn more about advanced AI agent patterns in our multi-agent workflows guide.

Memory Security and Privacy

Protect sensitive information in your memory system


Memory files contain potentially sensitive information about you, your work, and your preferences. Implement appropriate security measures:

File permissions: Ensure the memory directory is readable only by your user account. Default OpenClaw installations set this correctly, but you can verify with ls -la ~/.openclaw/workspace/memory/.
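The same check can be done programmatically. This sketch assumes a POSIX system and simply tests whether any group or other permission bits are set; `is_private` is a hypothetical helper name.

```python
import stat
from pathlib import Path


def is_private(path: Path) -> bool:
    """Return True when group and others have no permissions on path (POSIX only)."""
    mode = stat.S_IMODE(path.stat().st_mode)
    # 0o077 covers the read, write, and execute bits for group and others.
    return (mode & 0o077) == 0
```

A directory with mode 700 passes, while one with mode 755 does not.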

Shared contexts: MEMORY.md loads only in main sessions, not Discord, group chats, or shared environments. Never move private information to files that load in all contexts.

Sensitive data: Avoid storing passwords, API keys, or personal identifiers in memory. Use TOOLS.md for credentials (also private) or secure credential management systems.

Retention policies: Delete daily logs older than necessary (1-2 years for most use cases). Historical memory has diminishing value and increases risk exposure.
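A retention policy is easier to follow when you can list what has aged out. The sketch below only reports candidates and deletes nothing. The 730-day default reflects the upper end of the 1-2 year range mentioned above, and the function name is an assumption.

```python
import re
from datetime import date, timedelta
from pathlib import Path

# Matches daily log names such as 2026-02-25.md.
DAILY_LOG = re.compile(r"^(\d{4})-(\d{2})-(\d{2})\.md$")


def expired_logs(memory_dir: Path, today: date, keep_days: int = 730) -> list[Path]:
    """Return daily logs dated more than keep_days before today."""
    cutoff = today - timedelta(days=keep_days)
    expired = []
    for path in sorted(memory_dir.glob("*.md")):
        match = DAILY_LOG.match(path.name)
        if not match:
            continue  # project files and other notes are not daily logs
        try:
            logged = date(*map(int, match.groups()))
        except ValueError:
            continue  # looks like a date but is not a valid one
        if logged < cutoff:
            expired.append(path)
    return expired
```

Reviewing the list before deleting gives you a chance to move anything still useful into MEMORY.md first.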

For multi-user environments, see our AI assistant deployment guide for proper access controls.

Troubleshooting Memory Issues

Common memory problems and their solutions


Context overflow despite clearing memory: Large AGENTS.md or SOUL.md files can consume significant context on their own. Keep these under 10KB combined, and use file references instead of embedding full documents.

AI doesn't remember recent events: Check that today's daily memory file exists and that its name follows the memory/YYYY-MM-DD.md format. Also verify that AGENTS.md includes instructions to read today's and yesterday's memory files.

Inconsistent memory across sessions: Multiple OpenClaw instances or concurrent sessions can create conflicting memory. Use a single primary instance for main interactions; designate others as read-only workers.

Memory files growing too large: Daily logs should rarely exceed 50KB. If larger, you're logging too verbosely. Focus on decisions and outcomes, not play-by-play transcripts.
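The size limits mentioned throughout this guide (MEMORY.md under 10KB, AGENTS.md plus SOUL.md under 10KB combined, daily logs rarely above 50KB) can be checked in one pass. The sketch below is a hypothetical audit helper, not an OpenClaw command, and it treats 1KB as 1024 bytes.

```python
from pathlib import Path

KB = 1024


def size_warnings(workspace: Path) -> list[str]:
    """Return a warning for each file or file group over this guide's size limits."""

    def size(name: str) -> int:
        path = workspace / name
        return path.stat().st_size if path.exists() else 0

    warnings = []
    if size("MEMORY.md") > 10 * KB:
        warnings.append("MEMORY.md is over 10KB; prune outdated entries")
    if size("AGENTS.md") + size("SOUL.md") > 10 * KB:
        warnings.append("AGENTS.md and SOUL.md exceed 10KB combined")
    memory_dir = workspace / "memory"
    if memory_dir.is_dir():
        for log in sorted(memory_dir.glob("*.md")):
            if log.stat().st_size > 50 * KB:
                warnings.append(f"{log.name} is over 50KB; log decisions, not transcripts")
    return warnings
```

An empty list means every file is within the limits described here.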

Reference our OpenClaw setup guide for configuration troubleshooting.

Conclusion

Effective memory transforms AI assistants from tools into collaborative partners


OpenClaw's memory system gives your AI assistant the continuity and context awareness necessary for genuine usefulness. Daily logs provide recent history, long-term memory stores distilled knowledge, and proper context management prevents technical failures.

The key is regular curation: write meaningful daily logs, extract insights to long-term memory, and remove outdated information. Treat memory files as living documentation that evolves with your needs.

With well-maintained memory, your AI assistant becomes a true collaborative partner—one that learns from experience, remembers your preferences, and provides increasingly relevant assistance over time.

Ready to explore more? Check out our guides on building custom skills, AI workflow automation, and advanced prompt engineering.
