Advanced Claude Prompt Engineering: Few-Shot Learning & Chain-of-Thought

Prompt engineering strongly shapes the quality of AI outputs. Basic prompts tend to get basic results, while advanced techniques such as few-shot learning, chain-of-thought reasoning, and structured prompting get considerably more out of Claude. This guide covers prompt engineering strategies that produce more consistent, higher-quality results on complex tasks.

These techniques matter because they reduce trial-and-error, lower the risk of hallucinations, and help Claude understand nuanced requirements. Instead of repeatedly refining vague prompts, you'll design precise instructions that are far more likely to work on the first attempt.

Few-Shot Learning: Teaching by Example

Few-shot learning provides examples that demonstrate the exact output format you want

Few-shot learning means showing Claude examples of the task before asking it to perform that task. Instead of describing what you want in abstract terms, you provide a few concrete examples (typically 2-5) that demonstrate the pattern.

This technique works because Claude learns from examples in context—no fine-tuning required. The model extracts the underlying pattern from your examples and applies it to new inputs. Few-shot learning is especially effective for tasks with specific formats, unusual structures, or domain-specific knowledge.

Zero-shot (no examples):

Extract the key points from this article.

Few-shot (with examples):

Extract key points from articles in this format:

Example 1:

Article: "The company announced record profits of $2.5B in Q4..."

Key Points:

- Profit: $2.5B (Q4 record)

- Growth: 45% YoY

- Driver: Enterprise sales expansion

Example 2:

Article: "New legislation will require companies to disclose..."

Key Points:

- Change: Mandatory disclosure requirements

- Effective: January 2027

- Impact: Increased compliance costs

Now extract key points from this article:

[Your article here]

The few-shot version produces consistently formatted results that match your examples' structure and level of detail.
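
The prompt above can also be assembled in code so that every example follows exactly the same layout. The following is a minimal Python sketch; `build_few_shot_prompt` and its parameters are hypothetical names chosen for illustration and are not part of any Claude SDK.

```python
def build_few_shot_prompt(instruction, examples, new_article):
    """Assemble a few-shot prompt from (article, key_points) pairs.

    Hypothetical helper for illustration; not part of any Claude SDK.
    """
    parts = [instruction, ""]
    for number, (article, key_points) in enumerate(examples, start=1):
        parts.append(f"Example {number}:")
        parts.append(f'Article: "{article}"')
        parts.append("Key Points:")
        parts.extend(f"- {point}" for point in key_points)
        parts.append("")  # blank line separates one example from the next
    parts.append("Now extract key points from this article:")
    parts.append(new_article)
    return "\n".join(parts)


EXAMPLES = [
    (
        "The company announced record profits of $2.5B in Q4...",
        ["Profit: $2.5B (Q4 record)", "Growth: 45% YoY",
         "Driver: Enterprise sales expansion"],
    ),
    (
        "New legislation will require companies to disclose...",
        ["Change: Mandatory disclosure requirements",
         "Effective: January 2027", "Impact: Increased compliance costs"],
    ),
]

prompt = build_few_shot_prompt(
    "Extract key points from articles in this format:",
    EXAMPLES,
    "[Your article here]",
)
print(prompt)
```

Keeping the examples in a list makes it easy to add, remove, or reorder them without retyping the prompt by hand.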

Step 1: Choose the Right Number of Examples

More examples aren't always better—find the minimum that establishes the pattern

How many examples should you provide? Start with 2-3 and add more only if results are inconsistent. Too many examples waste context window space; too few might not establish a clear pattern.

Use 2-3 examples when:

- The task has a clear, simple pattern

- Output format is straightforward

- You're working with common domains

Use 4-6 examples when:

- The task requires nuanced judgment

- You need to cover edge cases

- The pattern has multiple variations

Use 7+ examples when:

- The task is highly specialized

- You're defining complex classification schemes

- Examples need to cover many categories

Test your prompts with different example counts. Often 3 examples perform as well as 10 but use less context.
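
One way to run that comparison is a small harness that scores the same test cases with different numbers of examples in the prompt. The sketch below is only an outline: `ask` is a hypothetical callable that sends a prompt to a model and returns its reply, `fake_model` is an offline stand-in so the code runs without an API key, and the third example and both test cases are made up for illustration.

```python
def accuracy_by_example_count(examples, test_cases, ask, counts=(2, 3, 5)):
    """Return {k: accuracy} when only the first k examples are in the prompt.

    `ask` is a hypothetical callable (prompt -> reply text); in practice it
    would wrap whichever Claude client you use.
    """
    results = {}
    for k in counts:
        shots = "\n\n".join(
            f"Input: {text}\nOutput: {label}" for text, label in examples[:k]
        )
        correct = 0
        for text, expected in test_cases:
            prompt = f"{shots}\n\nInput: {text}\nOutput:"
            if ask(prompt).strip() == expected:
                correct += 1
        results[k] = correct / len(test_cases)
    return results


def fake_model(prompt):
    """Offline stand-in for a real model call (keyword match only)."""
    question = prompt.rsplit("Input: ", 1)[1]
    return "Shipping Issue" if "arrived" in question else "Product Mismatch"


EXAMPLES = [
    ("My order never arrived", "Shipping Issue"),
    ("The product color doesn't match", "Product Mismatch"),
    ("Package arrived two weeks late", "Shipping Issue"),  # made-up example
]
TEST_CASES = [
    ("My parcel still has not arrived", "Shipping Issue"),
    ("The size is different from the listing", "Product Mismatch"),
]

scores = accuracy_by_example_count(EXAMPLES, TEST_CASES, fake_model, counts=(1, 2, 3))
print(scores)
```

With a real model behind `ask`, the smallest `k` whose accuracy matches the larger counts is a reasonable starting point.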

Step 2: Structure Examples for Maximum Clarity

Use consistent formatting and clear delimiters between examples

Format examples with clear boundaries and consistent structure. Use XML tags, markdown headers, or explicit numbering to separate examples:

Good structure:

EXAMPLES:

Example 1:

Input: Customer complaint: "My order never arrived"

Output:

Category: Shipping Issue

Priority: High

Suggested Action: Track package, offer replacement

Tone: Apologetic, solution-focused

Example 2:

Input: Customer complaint: "The product color doesn't match"

Output:

Category: Product Mismatch

Priority: Medium

Suggested Action: Offer return/exchange

Tone: Understanding, helpful

Now categorize this complaint:

Input: [New complaint]

This structure eliminates ambiguity about where examples end and the actual task begins.
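
The same examples can be delimited with XML tags instead of numbered labels. The sketch below shows one possible layout; the tag names (`examples`, `example`, `input`, `output`) and the helper functions are assumptions made for illustration, not a required schema.

```python
def format_examples(examples):
    """Wrap each (complaint, fields) pair in <example> tags."""
    blocks = []
    for complaint, fields in examples:
        output = "\n".join(f"{key}: {value}" for key, value in fields.items())
        blocks.append(
            "<example>\n"
            f"<input>{complaint}</input>\n"
            f"<output>\n{output}\n</output>\n"
            "</example>"
        )
    return "<examples>\n" + "\n".join(blocks) + "\n</examples>"


def build_prompt(examples, new_complaint):
    """Put the tagged examples first, then the actual task."""
    return (
        format_examples(examples)
        + "\n\nNow categorize this complaint:\n"
        + f"<input>{new_complaint}</input>"
    )


EXAMPLES = [
    ("My order never arrived", {
        "Category": "Shipping Issue",
        "Priority": "High",
        "Suggested Action": "Track package, offer replacement",
        "Tone": "Apologetic, solution-focused",
    }),
    ("The product color doesn't match", {
        "Category": "Product Mismatch",
        "Priority": "Medium",
        "Suggested Action": "Offer return/exchange",
        "Tone": "Understanding, helpful",
    }),
]

prompt = build_prompt(EXAMPLES, "[New complaint]")
print(prompt)
```

Because every example is closed by an explicit tag, the boundary between the examples and the new input does not depend on blank lines or numbering.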

Step 3: Use Chain-of-Thought Reasoning

Chain-of-thought prompting asks Claude to show its work before giving an answer

Chain-of-thought (CoT) prompting improves accuracy on complex reasoning tasks by asking Claude to think step-by-step before answering. Instead of jumping straight to conclusions, the model articulates its reasoning process.

Without CoT:

Is this SQL query optimized?

SELECT * FROM users WHERE email LIKE '%@gmail.com'

With CoT:

Analyze this SQL query's performance. Think through:

1. What indexes would help?

2. What are the performance bottlenecks?

3. How would you optimize it?

4. What's the expected improvement?

Then provide your recommendation.

Query: SELECT * FROM users WHERE email LIKE '%@gmail.com'

The CoT version catches more issues because Claude reasons through multiple optimization angles instead of pattern-matching to a quick answer.
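
If you reuse this pattern often, the reasoning steps can be kept as data and rendered into the prompt. This is a minimal sketch; `build_cot_prompt` is a hypothetical helper, and the wording it produces simply mirrors the example above.

```python
def build_cot_prompt(task, steps, subject):
    """Render a task, numbered reasoning steps, and the item to analyze."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"{task} Think through:\n"
        f"{numbered}\n"
        "Then provide your recommendation.\n\n"
        f"{subject}"
    )


STEPS = [
    "What indexes would help?",
    "What are the performance bottlenecks?",
    "How would you optimize it?",
    "What's the expected improvement?",
]

prompt = build_cot_prompt(
    "Analyze this SQL query's performance.",
    STEPS,
    "Query: SELECT * FROM users WHERE email LIKE '%@gmail.com'",
)
print(prompt)
```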

Step 4: Implement Zero-Shot Chain-of-Thought

Adding "Let's think step by step" activates chain-of-thought reasoning

The simplest CoT technique is zero-shot CoT: just add "Let's think step by step" or "Explain your reasoning" to your prompt. This triggers more deliberate reasoning without providing examples.

Basic prompt:

Should we invest in Project A or Project B?

Project A: 20% ROI, 2-year payback, high risk

Project B: 12% ROI, 1-year payback, low risk

Zero-shot CoT:

Should we invest in Project A or Project B? Let's think step by step about:

- Financial returns

- Risk tolerance

- Time horizon

- Strategic fit

Project A: 20% ROI, 2-year payback, high risk

Project B: 12% ROI, 1-year payback, low risk

The second version produces more thorough analysis because Claude explicitly considers multiple decision factors.
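
When the reasoning and the final decision arrive in one reply, it helps to ask for the decision in a marked location so code can pick it out. The sketch below assumes an `<answer>` tag convention, which is an addition for illustration and not something the prompt above requires; the sample reply is hand-written, not real model output.

```python
import re

COT_TRIGGER = "Let's think step by step."


def with_zero_shot_cot(question):
    """Append the trigger phrase and ask for a tagged final answer."""
    return (
        f"{question}\n\n{COT_TRIGGER} "
        "After your reasoning, give the final choice inside <answer></answer> tags."
    )


def extract_answer(reply):
    """Return the text inside <answer> tags, or None if the tags are missing."""
    match = re.search(r"<answer>(.*?)</answer>", reply, flags=re.DOTALL)
    return match.group(1).strip() if match else None


prompt = with_zero_shot_cot(
    "Should we invest in Project A or Project B?\n"
    "Project A: 20% ROI, 2-year payback, high risk\n"
    "Project B: 12% ROI, 1-year payback, low risk"
)

# Hand-written stand-in for a model reply.
sample_reply = (
    "Project A returns more but takes longer to pay back and carries more risk.\n"
    "<answer>Project B</answer>"
)
print(extract_answer(sample_reply))
```

Returning `None` when the tags are absent lets the caller decide whether to retry or fall back to reading the full reply.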

Step 5: Combine Few-Shot and Chain-of-Thought

The most powerful technique combines examples with reasoning steps

The most effective advanced prompting combines few-shot examples with chain-of-thought reasoning. Your examples demonstrate both the reasoning process and the final answer:

EXAMPLES:

Example 1:

Input: Bug report: "App crashes when uploading files > 100MB"

Reasoning:

1. Root cause: Likely memory issue or timeout

2. Severity: High - affects core functionality

3. User impact: Moderate - affects users with large files

4. Priority: Should fix in next sprint

5. Workaround available: Yes - compress files first

Output:

Priority: P1

Assign to: Backend team

Sprint: Next (2026-03-01)

Workaround: Document file compression in help docs

Now analyze this bug:

Input: [New bug report]

This teaches Claude both how to think about the problem and what the output should look like.
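
A worked example of this kind has three parts: the input, the reasoning steps, and the final output. The sketch below stores them in a small data class and renders them in that order; the class and function names are hypothetical, and the extra instruction to write the reasoning first is an assumption added for illustration.

```python
from dataclasses import dataclass


@dataclass
class WorkedExample:
    """One few-shot example that includes the reasoning, not just the answer."""
    report: str
    reasoning: list
    output: dict


def render_example(example, number):
    steps = "\n".join(f"{i}. {step}" for i, step in enumerate(example.reasoning, 1))
    fields = "\n".join(f"{key}: {value}" for key, value in example.output.items())
    return (
        f"Example {number}:\n"
        f'Input: Bug report: "{example.report}"\n'
        f"Reasoning:\n{steps}\n"
        f"Output:\n{fields}"
    )


def build_prompt(examples, new_report):
    rendered = "\n\n".join(render_example(ex, n) for n, ex in enumerate(examples, 1))
    return (
        f"EXAMPLES:\n\n{rendered}\n\n"
        "Now analyze this bug. Write the Reasoning section first, then the Output.\n"
        f"Input: {new_report}"
    )


EXAMPLE = WorkedExample(
    report="App crashes when uploading files > 100MB",
    reasoning=[
        "Root cause: Likely memory issue or timeout",
        "Severity: High - affects core functionality",
        "User impact: Moderate - affects users with large files",
    ],
    output={"Priority": "P1", "Assign to": "Backend team"},
)

prompt = build_prompt([EXAMPLE], "[New bug report]")
print(prompt)
```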

Step 6: Use Prompt Chaining for Complex Workflows

Break complex tasks into a series of focused prompts

Prompt chaining breaks a complex task into multiple sequential prompts, where each step's output feeds into the next. This often produces better results than asking Claude to do everything in one massive prompt, because each prompt can stay short and specific.

Example: Blog post generation

Step 1 - Research:

Find the top 5 pain points for [topic] based on this data.

Output as a numbered list.

Step 2 - Outline:

Create a blog post outline addressing these pain points:

[Output from Step 1]

Step 3 - Write:

Write the introduction section based on this outline:

[Output from Step 2]

Each step has a focused objective, making outputs more reliable. You can also swap models between steps—use Opus for creative ideation, Sonnet for structured writing.
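
The chain above amounts to a loop in which each reply becomes the input of the next prompt. Here is a minimal sketch under stated assumptions: `ask` is a hypothetical callable that sends a prompt to a model and returns the reply, `fake_model` is an offline stand-in, and the `{previous}` placeholder is a convention invented for this example.

```python
def run_chain(steps, ask, initial_input):
    """Run prompt templates in order, feeding each reply into the next step.

    Each template must contain a {previous} placeholder.
    Returns the final reply and a list of (prompt, reply) pairs.
    """
    previous = initial_input
    transcript = []
    for template in steps:
        prompt = template.format(previous=previous)
        previous = ask(prompt)
        transcript.append((prompt, previous))
    return previous, transcript


def fake_model(prompt):
    """Offline stand-in: echoes the first line of the prompt it received."""
    return f"[reply to: {prompt.splitlines()[0]}]"


STEPS = [
    "Find the top 5 pain points for [topic] based on this data.\n"
    "Output as a numbered list.\n\n{previous}",
    "Create a blog post outline addressing these pain points:\n\n{previous}",
    "Write the introduction section based on this outline:\n\n{previous}",
]

final_reply, transcript = run_chain(STEPS, fake_model, "[data]")
for prompt, reply in transcript:
    print(reply)
```

Keeping the transcript makes it possible to inspect or rerun a single step when one stage of the chain produces weak output.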

Step 7: Apply Structured Output Formatting

Define exact output schemas to get consistent, parseable results

Define exact output schemas to get consistent, parseable results

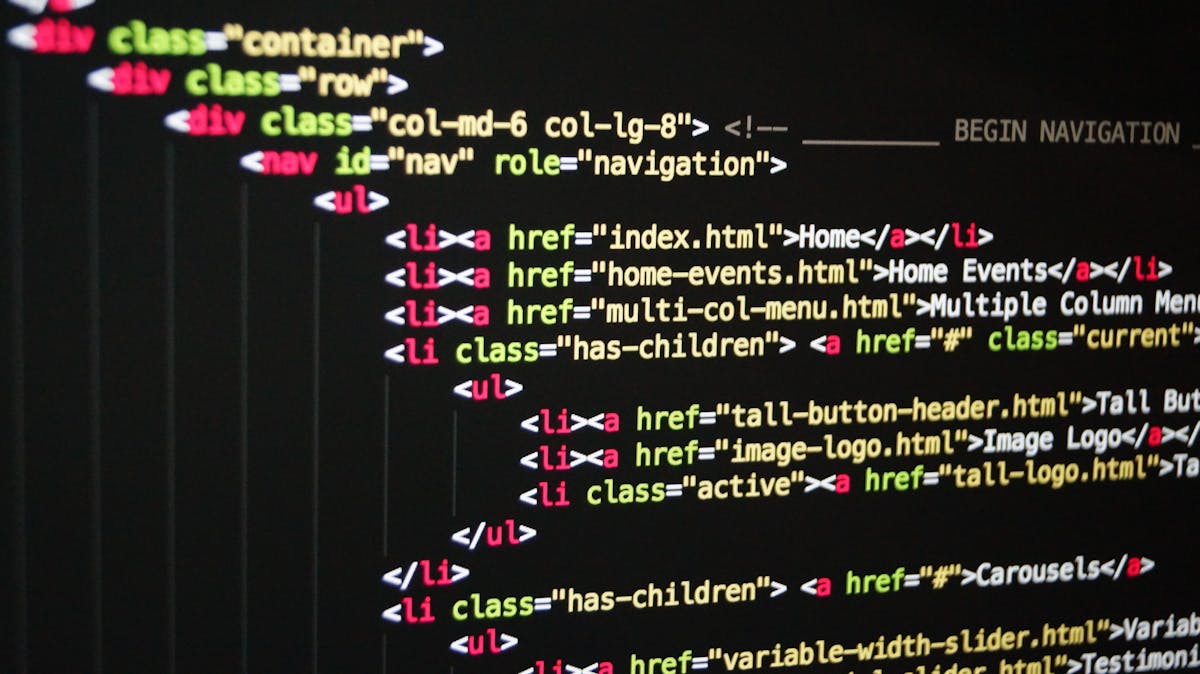

For outputs that need to be parsed by code, use structured output formats with explicit schemas. Define the expected JSON structure in your prompt:

Extract meeting information into this JSON format:

{

"date": "YYYY-MM-DD",

"attendees": ["name1", "name2"],

"decisions": [

{"decision": "text", "owner": "name", "deadline": "YYYY-MM-DD"}

],

"action_items": [

{"task": "text", "assignee": "name", "priority": "high|medium|low"}

]

}

Meeting transcript:

[Transcript here]

Return ONLY valid JSON with no explanation.

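This makes machine-parseable output far more likely, but it is not a guarantee: the model can still occasionally return malformed JSON or wrap it in extra text, so validate the response in code before using it. For more on this technique, see our guide on structured output with Claude.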
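
Code that consumes the reply can check it against the schema before trusting it. The following sketch uses only the Python standard library; the function name, the specific checks, and the sample reply are assumptions for illustration, and the sample data is invented.

```python
import json
from datetime import date

FENCE = "`" * 3  # Markdown code fence marker
REQUIRED_KEYS = {"date", "attendees", "decisions", "action_items"}
PRIORITIES = {"high", "medium", "low"}


def parse_meeting_json(reply):
    """Parse a model reply and check it against the schema in the prompt.

    Raises ValueError if the reply is not valid JSON or breaks the schema.
    """
    text = reply.strip()
    if text.startswith(FENCE):
        # Drop a surrounding code fence and an optional "json" language tag.
        text = text.strip("`").removeprefix("json").strip()
    data = json.loads(text)  # json.JSONDecodeError is a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    date.fromisoformat(str(data["date"]))  # ValueError on a malformed date
    for item in data["action_items"]:
        if not isinstance(item, dict) or item.get("priority") not in PRIORITIES:
            raise ValueError(f"bad action item: {item!r}")
    return data


# Invented sample reply, wrapped in a code fence as models sometimes do.
sample_reply = (
    FENCE + "json\n"
    '{"date": "2026-03-01", "attendees": ["Ana", "Ben"], "decisions": [], '
    '"action_items": [{"task": "Send notes", "assignee": "Ana", "priority": "high"}]}\n'
    + FENCE
)
meeting = parse_meeting_json(sample_reply)
print(meeting["action_items"][0]["assignee"])
```

A caller can catch `ValueError` and retry the request or route the transcript to manual handling.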

Step 8: Use Role Prompting for Specialized Tasks

Assign Claude a specific role or expertise to improve domain-specific responses

Role prompting sets context by assigning Claude a persona with specific expertise. This focuses responses on relevant knowledge and appropriate tone:

You are a senior DevOps engineer with 10 years of Kubernetes experience.

A junior developer asks: "Should we use StatefulSets or Deployments for our database?"

Explain the tradeoffs in a mentoring tone. Include:

- Technical differences

- When to use each

- Common pitfalls

- Your recommendation with reasoning

Role prompting tends to help because it signals which domain knowledge is relevant and sets expectations for depth and style. Use it for technical explanations, domain-specific analysis, or tasks requiring particular perspectives.
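
When calling Claude through an API, the role usually goes in the system prompt and the question in the user message. The sketch below only builds the request arguments and sends nothing. It assumes the request shape of the Anthropic Messages API (top-level `system`, a `messages` list, `model`, and `max_tokens`); the helper name is hypothetical and the model name is a placeholder you would replace.

```python
def build_role_request(role, question, requirements, model="MODEL_NAME_PLACEHOLDER"):
    """Build keyword arguments shaped like a Messages API request.

    Assumption: the role is passed as the top-level `system` value and the
    question as a single user message. Nothing is sent over the network here.
    """
    bullet_list = "\n".join(f"- {item}" for item in requirements)
    user_message = (
        f'A junior developer asks: "{question}"\n\n'
        "Explain the tradeoffs in a mentoring tone. Include:\n"
        f"{bullet_list}"
    )
    return {
        "model": model,
        "max_tokens": 1024,  # arbitrary value for illustration
        "system": role,
        "messages": [{"role": "user", "content": user_message}],
    }


request = build_role_request(
    role="You are a senior DevOps engineer with 10 years of Kubernetes experience.",
    question="Should we use StatefulSets or Deployments for our database?",
    requirements=[
        "Technical differences",
        "When to use each",
        "Common pitfalls",
        "Your recommendation with reasoning",
    ],
)
print(request["system"])
print(request["messages"][0]["content"])
```

Separating the role from the question makes it easy to reuse one persona across many questions.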

Step 9: Handle Ambiguity with Constraint Prompting

Define constraints and edge case handling to eliminate ambiguity

Ambiguous prompts produce inconsistent results. Constraint prompting explicitly defines rules, boundaries, and edge case behavior:

Ambiguous:

Categorize these customer support tickets.

Constrained:

Categorize customer support tickets using these rules:

Categories:

- Technical: Software bugs, API errors, integration issues

- Billing: Payments, invoices, subscription changes

- Feature Request: New capabilities, enhancements

- Invalid: Spam or abusive messages

- Other: Anything that doesn't fit the categories above

Edge cases:

- If a ticket covers multiple categories, choose the PRIMARY issue

- If completely unclear, mark as "Other" and flag for human review

- If spam/abuse, mark as "Invalid"

Return: {"category": "X", "confidence": "high|medium|low", "flag_for_review": true|false}

The constrained version handles edge cases consistently and provides confidence indicators for downstream processing.
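
Downstream code can enforce the same constraints on the reply. This is a minimal sketch: the function name is hypothetical, "Invalid" is accepted because the edge-case rules allow it for spam, and the rule that low-confidence results are always sent for review is an extra assumption, not something the prompt states.

```python
import json

ALLOWED_CATEGORIES = {"Technical", "Billing", "Feature Request", "Other", "Invalid"}
ALLOWED_CONFIDENCE = {"high", "medium", "low"}


def check_ticket_result(reply):
    """Validate a classification reply against the rules in the prompt."""
    result = json.loads(reply)
    if not isinstance(result, dict):
        raise ValueError("expected a JSON object")
    if result.get("category") not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {result.get('category')!r}")
    if result.get("confidence") not in ALLOWED_CONFIDENCE:
        raise ValueError(f"unknown confidence: {result.get('confidence')!r}")
    if not isinstance(result.get("flag_for_review"), bool):
        raise ValueError("flag_for_review must be true or false")
    # Extra safeguard (an assumption): always review low-confidence results.
    needs_review = result["flag_for_review"] or result["confidence"] == "low"
    return {**result, "flag_for_review": needs_review}


# Hand-written sample reply, not real model output.
sample = '{"category": "Billing", "confidence": "low", "flag_for_review": false}'
checked = check_ticket_result(sample)
print(checked)
```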

Step 10: Optimize for Context Window Usage

Balance between examples, instructions, and actual task content

Advanced prompts can consume significant context. Optimize by:

- Compress examples: Remove redundant text, use abbreviations in consistent ways

- Reuse structure: Define formats once, reference them in subsequent examples

- Prioritize recent examples: Place most relevant examples last (recency bias)

- Use prompt templates: Store common patterns, inject only variable parts
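
The template and prioritization ideas above can be sketched with the standard library. Everything here is illustrative: the template fields are invented, the four-characters-per-token estimate is a rough heuristic rather than a real tokenizer, and the examples are assumed to be ordered from least to most relevant.

```python
from string import Template

# Stored once; only the variable parts are injected per request.
PROMPT_TEMPLATE = Template("$instructions\n\n$examples\n\nInput: $task_input")


def rough_token_count(text):
    """Crude estimate of about 4 characters per token (not a real tokenizer)."""
    return max(1, len(text) // 4)


def fit_examples(examples, budget_tokens):
    """Keep the last (most relevant) examples that fit the budget, in order."""
    kept = []
    used = 0
    for example in reversed(examples):
        cost = rough_token_count(example)
        if used + cost > budget_tokens:
            break
        kept.append(example)
        used += cost
    return list(reversed(kept))


EXAMPLES = [
    "Input: My order never arrived\nOutput: Shipping Issue",
    "Input: The product color doesn't match\nOutput: Product Mismatch",
]

selected = fit_examples(EXAMPLES, budget_tokens=20)
prompt = PROMPT_TEMPLATE.substitute(
    instructions="Categorize the complaint.",
    examples="\n\n".join(selected),
    task_input="[New complaint]",
)
print(prompt)
```

For real budgets, replace `rough_token_count` with the token counting method your model provider offers.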

For very long contexts, consider using OpenClaw's prompt chaining features to break work into smaller chunks.

Advanced: Meta-Prompting for Self-Improvement

Use Claude to analyze and improve your prompts

Meta-prompting uses Claude to improve prompts. Ask Claude to analyze your prompt and suggest improvements:

Review this prompt and suggest improvements:

[Your current prompt]

Consider:

- Clarity of instructions

- Whether examples are needed

- Missing edge cases

- Potential ambiguities

- Structure and formatting

Provide the improved version.

This technique helps you iterate on prompts faster. Claude can spot ambiguities you missed and suggest better example selection.
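
The review request itself can be generated from a stored checklist. In this sketch the helper name is hypothetical, and wrapping the prompt under review in `<prompt>` tags is an assumption added so that its own instructions are not confused with the review instructions.

```python
REVIEW_CRITERIA = [
    "Clarity of instructions",
    "Whether examples are needed",
    "Missing edge cases",
    "Potential ambiguities",
    "Structure and formatting",
]


def build_meta_prompt(current_prompt, criteria=REVIEW_CRITERIA):
    """Build a request asking the model to critique and rewrite a prompt."""
    checklist = "\n".join(f"- {item}" for item in criteria)
    return (
        "Review this prompt and suggest improvements:\n\n"
        f"<prompt>\n{current_prompt}\n</prompt>\n\n"
        f"Consider:\n{checklist}\n\n"
        "Provide the improved version."
    )


meta_prompt = build_meta_prompt("Categorize these customer support tickets.")
print(meta_prompt)
```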

Conclusion

Master these techniques to consistently get high-quality outputs from Claude

Advanced prompt engineering transforms Claude from a helpful assistant into a reliable professional tool. Few-shot learning establishes patterns, chain-of-thought reasoning improves accuracy, and prompt chaining handles complex workflows. Combined with structured outputs and clear constraints, these techniques produce consistent, high-quality results.

Start by applying one technique to your most common Claude tasks. Test variations, measure results, and refine. Build a library of proven prompts for repeated use. Over time, you'll develop intuition for which techniques work best for different task types.

For more Claude optimization strategies, explore our guides on Claude system prompts, Claude API best practices, and building AI workflows. The Anthropic prompt engineering documentation provides additional examples and research-backed techniques.

Ready to level up your prompts? Start with few-shot learning on your most repetitive tasks—that's where you'll see immediate impact. Then experiment with chain-of-thought for complex reasoning and prompt chaining for multi-step workflows.
