Claude API Python Tutorial: Build AI Apps with Anthropic's API

The Claude API gives you direct access to Anthropic's most capable AI models. Unlike a browser-based chat interface, the API lets you build custom applications, automate workflows, and integrate AI into your existing systems.

This tutorial covers everything you need to go from zero to production: authentication, basic messaging, streaming responses, tool use, and best practices for building reliable AI applications.

By the end, you'll have working Python code for common use cases and understand how to extend it for your specific needs.

Getting Started with the Claude API

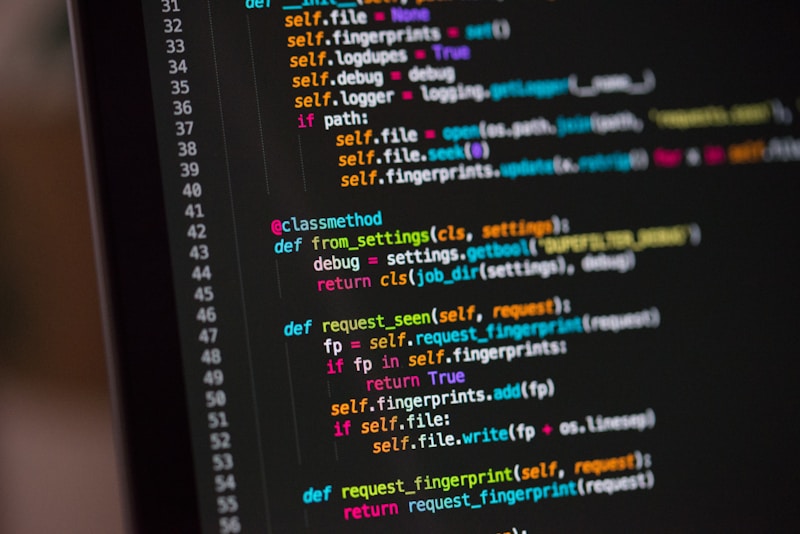

Setting up your Python environment for Claude API development


First, get your API key and set up your environment.

Get your API key:

- Create an account at console.anthropic.com

- Navigate to API Keys

- Create a new key and save it securely

Install the official SDK (PyPI package):

```bash
pip install anthropic
```

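Set your API key as an environment variable (the SDK reads ANTHROPIC_API_KEY automatically):

```bash
export ANTHROPIC_API_KEY="sk-ant-..."
```

Alternatively, set it from Python before creating the client. Avoid hardcoding real keys in code you commit:

```python
import os

os.environ["ANTHROPIC_API_KEY"] = "sk-ant-..."
```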

Your first API call:

```python
from anthropic import Anthropic

client = Anthropic()  # Reads ANTHROPIC_API_KEY from the environment

message = client.messages.create(
    model="claude-sonnet-4-20250514",
    max_tokens=1024,
    messages=[
        {"role": "user", "content": "Hello, Claude!"}
    ],
)

print(message.content[0].text)
```

That's it—you're now calling Claude directly from Python.

Understanding the Messages API

The Messages API is the core interface for Claude interactions


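The Messages API handles all conversations with Claude. It is stateless: each request carries the full conversation history in messages, as a list of user and assistant turns. Here's the structure: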

Basic message structure:

```python
response = client.messages.create(
    model="claude-sonnet-4-20250514",  # Model selection
    max_tokens=1024,  # Maximum response length
    system="You are a helpful coding assistant.",  # System prompt
    messages=[
        {"role": "user", "content": "Explain Python decorators"},
        {"role": "assistant", "content": "Decorators are..."},  # Earlier reply
        {"role": "user", "content": "Show me an example"},
    ],
)
```
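Because the history is just a list of role/content dicts, you can sanity-check it before sending it. The following is a minimal sketch that assumes a conservative rule: the list starts with a user turn and the roles alternate. The validate_messages helper is hypothetical and not part of the SDK. Anthropic's documentation defines the exact rules the API enforces.

```python
def validate_messages(messages: list[dict]) -> None:
    """Raise ValueError unless the history starts with a user turn
    and user/assistant roles alternate (a conservative, assumed rule)."""
    if not messages:
        raise ValueError("messages must not be empty")
    expected = "user"
    for i, msg in enumerate(messages):
        role = msg.get("role")
        if role != expected:
            raise ValueError(f"message {i} has role {role!r}, expected {expected!r}")
        # Flip the expected role for the next turn
        expected = "assistant" if expected == "user" else "user"


history = [
    {"role": "user", "content": "Explain Python decorators"},
    {"role": "assistant", "content": "Decorators are..."},
    {"role": "user", "content": "Show me an example"},
]
validate_messages(history)  # Passes silently
```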

Available models (see Anthropic documentation for the latest list):

- claude-opus-4-20250514 - Most capable, best for complex tasks
- claude-sonnet-4-20250514 - Balanced performance and cost
- claude-3-haiku-20240307 - Fastest and cheapest

Key parameters:

- model - Which Claude version to use (required)
- max_tokens - Maximum number of tokens in the response (required)
- system - System prompt that configures behavior
- messages - Conversation history (required)
- temperature - Randomness, from 0.0 to 1.0 (defaults to 1.0)
- stop_sequences - Strings that stop generation when produced
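Optional parameters such as system, temperature, and stop_sequences can be left out of the call entirely when you don't need them. One way to handle this is a small builder that drops unset values and checks the ranges listed above. This is a sketch: build_request is a hypothetical helper, and its output is meant to be passed as client.messages.create(**kwargs).

```python
from typing import Optional


def build_request(
    model: str,
    max_tokens: int,
    messages: list,
    system: Optional[str] = None,
    temperature: Optional[float] = None,
    stop_sequences: Optional[list] = None,
) -> dict:
    """Collect Messages API arguments, omitting optional ones left as None."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    if temperature is not None and not 0.0 <= temperature <= 1.0:
        raise ValueError("temperature must be between 0.0 and 1.0")
    kwargs = {"model": model, "max_tokens": max_tokens, "messages": messages}
    optional = {"system": system, "temperature": temperature, "stop_sequences": stop_sequences}
    # Only forward the optional parameters the caller actually set
    kwargs.update({k: v for k, v in optional.items() if v is not None})
    return kwargs


kwargs = build_request(
    "claude-sonnet-4-20250514",
    1024,
    [{"role": "user", "content": "Hello, Claude!"}],
    temperature=0.2,
)
# response = client.messages.create(**kwargs)
```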

Handling the response:

```python
response = client.messages.create(...)  # Same arguments as above

text = response.content[0].text  # First content block holds the reply text
print(text)

print(f"Input tokens: {response.usage.input_tokens}")
print(f"Output tokens: {response.usage.output_tokens}")
print(f"Stop reason: {response.stop_reason}")
```
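Reading response.content[0].text assumes the first content block is text. That holds for plain chat, but not always (for example, when tools are involved). A more defensive sketch joins every text block and flags truncation through stop_reason. The helper name is hypothetical. The demo uses SimpleNamespace stand-ins instead of a real API response so it runs offline.

```python
from types import SimpleNamespace


def response_text(response) -> str:
    """Join all text blocks in a Messages API response."""
    text = "".join(block.text for block in response.content if block.type == "text")
    if response.stop_reason == "max_tokens":
        # The reply hit the max_tokens limit and was cut off
        text += "\n[truncated: raise max_tokens for a complete answer]"
    return text


# Offline stand-in shaped like a response object (not a real API call)
fake = SimpleNamespace(
    stop_reason="end_turn",
    content=[
        SimpleNamespace(type="text", text="Hello"),
        SimpleNamespace(type="text", text=", world"),
    ],
)
print(response_text(fake))  # Hello, world
```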

Streaming Responses

Stream responses for better UX in real-time applications


For long responses, streaming provides better user experience by showing text as it's generated.

Basic streaming:

```python
with client.messages.stream(
    model="claude-sonnet-4-20250514",
    max_tokens=1024,
    messages=[{"role": "user", "content": "Write a short story"}],
) as stream:
    for text in stream.text_stream:
        print(text, end="", flush=True)
```

Event-based streaming:

```python
with client.messages.stream(
    model="claude-sonnet-4-20250514",
    max_tokens=1024,
    messages=[{"role": "user", "content": "Explain machine learning"}],
) as stream:
    for event in stream:
        # Text arrives as content_block_delta events carrying a text_delta
        if event.type == "content_block_delta" and event.delta.type == "text_delta":
            print(event.delta.text, end="", flush=True)
        elif event.type == "message_stop":
            print("\n[Complete]")
```
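When you consume raw events yourself, you usually also want the full text once the stream finishes. The sketch below shows that accumulation. It runs against plain objects with the same type/delta attributes as the events above, so you can try it without a network call. The collect_stream_text name and the fake events are illustrative. With a real stream, the SDK's stream.get_final_message() can also return the assembled message after iteration.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_stream_text(events: Iterable) -> str:
    """Concatenate the text deltas from a sequence of streaming events."""
    parts = []
    for event in events:
        if event.type == "content_block_delta" and event.delta.type == "text_delta":
            parts.append(event.delta.text)
    return "".join(parts)


# Fake events mimicking the shapes handled in the loop above
events = [
    SimpleNamespace(type="message_start"),
    SimpleNamespace(type="content_block_delta", delta=SimpleNamespace(type="text_delta", text="Machine ")),
    SimpleNamespace(type="content_block_delta", delta=SimpleNamespace(type="text_delta", text="learning")),
    SimpleNamespace(type="message_stop"),
]
print(collect_stream_text(events))  # Machine learning
```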

Async streaming (for web apps):

```python
import asyncio

from anthropic import AsyncAnthropic

async_client = AsyncAnthropic()


async def stream_response(prompt: str):
    async with async_client.messages.stream(
        model="claude-sonnet-4-20250514",
        max_tokens=1024,
        messages=[{"role": "user", "content": prompt}],
    ) as stream:
        async for text in stream.text_stream:
            yield text


async def main():
    async for chunk in stream_response("Tell me a joke"):
        print(chunk, end="")


asyncio.run(main())
```

Tool Use (Function Calling)

Give Claude the ability to call your functions


Tool use lets Claude call your Python functions to access external data or perform actions.

Define your tools:

```python
tools = [
    {
        "name": "get_weather",
        "description": "Get current weather for a location",
        "input_schema": {
            "type": "object",
            "properties": {
                "location": {
                    "type": "string",
                    "description": "City and country, e.g. London, UK",
                },
                "unit": {
                    "type": "string",
                    "enum": ["celsius", "fahrenheit"],
                    "description": "Temperature unit",
                },
            },
            "required": ["location"],
        },
    }
]
```
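Claude generally follows input_schema, but it is worth validating inputs before you run a real function. The following is a hand-rolled sketch that checks only the required and enum keywords used in the schema above. It is not a full JSON Schema validator; a library such as jsonschema covers the complete specification. The validate_tool_input name is hypothetical.

```python
def validate_tool_input(schema: dict, tool_input: dict) -> list[str]:
    """Return a list of problems; an empty list means the input passed these checks."""
    problems = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in tool_input:
            problems.append(f"missing required field: {name}")
    for name, value in tool_input.items():
        spec = properties.get(name)
        if spec is None:
            # Stricter than JSON Schema's default, which allows extra properties
            problems.append(f"unexpected field: {name}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"{name} must be one of {spec['enum']}")
    return problems


# Same input_schema as the get_weather tool above
weather_schema = {
    "type": "object",
    "properties": {
        "location": {"type": "string", "description": "City and country, e.g. London, UK"},
        "unit": {"type": "string", "enum": ["celsius", "fahrenheit"], "description": "Temperature unit"},
    },
    "required": ["location"],
}

print(validate_tool_input(weather_schema, {"location": "Tokyo, Japan"}))  # []
print(validate_tool_input(weather_schema, {"unit": "kelvin"}))
```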

Handle tool calls:

```python
import json


def get_weather(location: str, unit: str = "celsius") -> dict:
    # Your actual weather API call here
    return {"temp": 22, "condition": "sunny", "location": location}


user_message = {"role": "user", "content": "What's the weather in Tokyo?"}

response = client.messages.create(
    model="claude-sonnet-4-20250514",
    max_tokens=1024,
    tools=tools,
    messages=[user_message],
)

if response.stop_reason == "tool_use":
    # Claude may emit a text block before the tool call, so find the
    # tool_use block by type instead of assuming its position
    tool_use = next(block for block in response.content if block.type == "tool_use")

    # Execute the requested function
    if tool_use.name == "get_weather":
        result = get_weather(**tool_use.input)
    else:
        result = {"error": f"Unknown tool: {tool_use.name}"}

    # Send the result back, together with the assistant turn that requested it
    final_response = client.messages.create(
        model="claude-sonnet-4-20250514",
        max_tokens=1024,
        tools=tools,
        messages=[
            user_message,
            {"role": "assistant", "content": response.content},
            {
                "role": "user",
                "content": [
                    {
                        "type": "tool_result",
                        "tool_use_id": tool_use.id,
                        "content": json.dumps(result),
                    }
                ],
            },
        ],
    )

    print(final_response.content[0].text)
```
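Once you have more than one tool, an if tool_name == ... chain becomes unwieldy. A common pattern is a dictionary that maps each tool name to its function. That dictionary turns every tool_use block into a tool_result block, marking failures with is_error so Claude can see what went wrong. This is a sketch: TOOL_REGISTRY and run_tool_calls are illustrative names, and the demo blocks are SimpleNamespace stand-ins for response.content.

```python
import json
from types import SimpleNamespace
from typing import Callable


def get_weather(location: str, unit: str = "celsius") -> dict:
    # Placeholder standing in for a real weather lookup
    return {"temp": 22, "condition": "sunny", "location": location}


TOOL_REGISTRY: dict[str, Callable[..., dict]] = {"get_weather": get_weather}


def run_tool_calls(content_blocks: list) -> list[dict]:
    """Execute every tool_use block and build the matching tool_result blocks."""
    results = []
    for block in content_blocks:
        if block.type != "tool_use":
            continue
        func = TOOL_REGISTRY.get(block.name)
        try:
            if func is None:
                raise ValueError(f"Unknown tool: {block.name}")
            output = json.dumps(func(**block.input))
            is_error = False
        except Exception as exc:
            # Report the failure to Claude instead of crashing the app
            output, is_error = str(exc), True
        results.append({
            "type": "tool_result",
            "tool_use_id": block.id,
            "content": output,
            "is_error": is_error,
        })
    return results


# Offline stand-in for response.content (the id is fake)
blocks = [
    SimpleNamespace(type="text", text="Let me check."),
    SimpleNamespace(type="tool_use", id="toolu_1", name="get_weather", input={"location": "Tokyo"}),
]
print(run_tool_calls(blocks))
```

The returned list becomes the content of the next user message, replacing the hand-built tool_result list.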

Building a Reusable Client

Create a maintainable API wrapper for production use


For production applications, wrap the API in a reusable class:

```python
from typing import Generator, Optional

from anthropic import Anthropic


class ClaudeClient:
    def __init__(
        self,
        model: str = "claude-sonnet-4-20250514",
        system_prompt: Optional[str] = None,
    ):
        self.client = Anthropic()
        self.model = model
        self.system_prompt = system_prompt
        self.conversation: list = []

    def _request_kwargs(self, max_tokens: int) -> dict:
        """Shared request arguments; omit system when no prompt is set."""
        kwargs = {
            "model": self.model,
            "max_tokens": max_tokens,
            "messages": self.conversation,
        }
        if self.system_prompt:
            kwargs["system"] = self.system_prompt
        return kwargs

    def chat(self, message: str, max_tokens: int = 1024) -> str:
        """Send a message and get a response."""
        self.conversation.append({"role": "user", "content": message})
        try:
            response = self.client.messages.create(**self._request_kwargs(max_tokens))
        except Exception:
            # Drop the unanswered user turn so the history stays consistent
            self.conversation.pop()
            raise
        assistant_message = response.content[0].text
        self.conversation.append({"role": "assistant", "content": assistant_message})
        return assistant_message

    def stream_chat(self, message: str, max_tokens: int = 1024) -> Generator[str, None, None]:
        """Stream a response for real-time display."""
        self.conversation.append({"role": "user", "content": message})
        full_response = ""
        with self.client.messages.stream(**self._request_kwargs(max_tokens)) as stream:
            for text in stream.text_stream:
                full_response += text
                yield text
        # Runs only after the caller has consumed the whole stream
        self.conversation.append({"role": "assistant", "content": full_response})

    def clear_history(self):
        """Reset conversation history."""
        self.conversation = []


claude = ClaudeClient(system_prompt="You are a Python expert.")
print(claude.chat("What's the best way to handle exceptions?"))
print(claude.chat("Show me an example"))  # Has context from previous message
```
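The conversation is a plain list of dicts, so you can persist it between sessions with json. The following minimal sketch assumes you store history in a local file; the save_history and load_history helpers and the file name are hypothetical. After loading, you could assign the result to claude.conversation.

```python
import json
import tempfile
from pathlib import Path


def save_history(conversation: list, path: Path) -> None:
    """Write the message list to a JSON file."""
    path.write_text(json.dumps(conversation, indent=2), encoding="utf-8")


def load_history(path: Path) -> list:
    """Read a message list back; start fresh if the file does not exist."""
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


history = [
    {"role": "user", "content": "What's the best way to handle exceptions?"},
    {"role": "assistant", "content": "Catch specific exceptions..."},
]
with tempfile.TemporaryDirectory() as tmp:
    history_file = Path(tmp) / "chat_history.json"
    save_history(history, history_file)
    restored = load_history(history_file)
print(restored == history)  # True
```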

Error Handling and Rate Limits

Handle API errors gracefully in production


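Production applications need robust error handling. The SDK already retries some transient errors automatically, including connection errors, 429 rate limits, and 5xx server errors. By default it retries twice with a short exponential backoff, which you can change with Anthropic(max_retries=...). For more control, add your own retry logic on top: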

```python
import time

from anthropic import (
    Anthropic,
    APIConnectionError,
    APIError,
    RateLimitError,
)


def call_with_retry(
    client: Anthropic,
    messages: list,
    max_retries: int = 3,
) -> str:
    for attempt in range(max_retries):
        try:
            response = client.messages.create(
                model="claude-sonnet-4-20250514",
                max_tokens=1024,
                messages=messages,
            )
            return response.content[0].text
        except (RateLimitError, APIConnectionError) as e:
            # Transient failures: exponential backoff (1s, 2s, 4s, ...)
            wait_time = 2 ** attempt
            print(f"{type(e).__name__}: retrying in {wait_time}s...")
            time.sleep(wait_time)
        except APIError as e:
            # Other API errors (e.g. invalid requests) are treated as non-retryable here
            print(f"API error: {e}")
            raise

    raise RuntimeError("Max retries exceeded")
```
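With pure doubling, clients that were rate-limited at the same moment also retry at the same moment. A common refinement is "full jitter": pick a random wait between zero and the exponential ceiling. The following minimal sketch could replace wait_time = 2 ** attempt in the function above. The backoff_delay name and its base and cap values are illustrative choices. If a rate-limit response carries a retry-after header, honoring that value is usually the better option.

```python
import random


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 30.0) -> float:
    """Full-jitter backoff: a random delay in [0, min(cap, base * 2**attempt)] seconds."""
    ceiling = min(cap, base * (2 ** attempt))
    return random.uniform(0, ceiling)


for attempt in range(5):
    print(f"attempt {attempt}: wait {backoff_delay(attempt):.2f}s")
```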

Rate limit guidelines:

- Implement exponential backoff

- Cache responses when possible

- Use batch processing for bulk operations

- Monitor usage in the Anthropic console
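To cache responses, one simple approach is an in-memory cache keyed on the request arguments, so identical requests skip the API. This sketch rests on several assumptions. ResponseCache is a hypothetical class and the cache never expires. It is also only appropriate when reusing an earlier answer for the same input is acceptable. The fetch argument stands in for a function that calls the API.

```python
import hashlib
import json
from typing import Callable


class ResponseCache:
    """In-memory cache mapping a hash of the request arguments to response text."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}

    @staticmethod
    def _key(request: dict) -> str:
        # sort_keys makes the hash independent of argument order
        return hashlib.sha256(json.dumps(request, sort_keys=True).encode()).hexdigest()

    def get_or_call(self, request: dict, fetch: Callable[[dict], str]) -> str:
        key = self._key(request)
        if key not in self._store:
            self._store[key] = fetch(request)  # Only call the API on a cache miss
        return self._store[key]


calls: list = []


def fake_fetch(request: dict) -> str:
    # Stand-in for a real API call; records each invocation
    calls.append(request)
    return f"answer #{len(calls)}"


cache = ResponseCache()
req = {
    "model": "claude-sonnet-4-20250514",
    "max_tokens": 1024,
    "messages": [{"role": "user", "content": "Hello, Claude!"}],
}
print(cache.get_or_call(req, fake_fetch))  # answer #1
print(cache.get_or_call(req, fake_fetch))  # answer #1 (served from cache)
```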

Conclusion

You're ready to build production AI applications


The Claude API opens up possibilities beyond what's available in chat interfaces. You can now build custom AI applications, automate workflows, and integrate Claude into your existing systems.

Key takeaways:

- Use the Messages API for all interactions

- Stream responses for better UX

- Implement tools for external capabilities

- Handle errors with retry logic

- Build reusable clients for production

Continue learning:

- MCP Tutorial for advanced integrations

- OpenClaw setup for 24/7 AI assistants

- Self-hosted alternatives for privacy

Start with simple use cases and iterate. The API is flexible enough to support whatever you build.

FAQ

Common questions about the Claude API


How much does the Claude API cost?

Pricing is per token. Claude Sonnet: $3 per million input tokens, $15 per million output tokens. Claude 3 Haiku: $0.25 per million input tokens, $1.25 per million output tokens. Check anthropic.com/pricing for current rates.
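Because pricing is per token, you can estimate the cost of a request from the usage fields (input_tokens, output_tokens) shown earlier. This sketch hardcodes the per-million-token rates quoted above as assumptions. Those rates will change over time, so check the pricing page before relying on them.

```python
# USD per million tokens, copied from the rates above; verify against current pricing
PRICES = {
    "sonnet": {"input": 3.00, "output": 15.00},
    "haiku": {"input": 0.25, "output": 1.25},
}


def estimate_cost(model_family: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request, given token counts from response.usage."""
    rates = PRICES[model_family]
    return (input_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000


# 2,000 input tokens and 500 output tokens on Sonnet: (6,000 + 7,500) / 1,000,000
print(f"${estimate_cost('sonnet', 2_000, 500):.4f}")  # $0.0135
```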

What's the difference between Claude API and OpenClaw?

The Claude API is Anthropic's raw interface. OpenClaw adds persistence, messaging platform integration, tools, and memory on top of Claude (or other models). Use the API for custom apps, OpenClaw for personal assistant use cases.

Can I use Claude API for commercial projects?

Yes, the API is designed for commercial use. Review Anthropic's usage policies for specific guidelines.

What's the maximum context length?

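Claude supports 200K tokens of context, which is more than sufficient for most applications. Every request re-sends the full conversation history, so long chats raise your input-token cost with each turn. Trim or summarize older messages to keep costs down.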
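A simple way to cap context size (and cost) in a long chat is to keep only the most recent turns. This sketch assumes a plain list of alternating user/assistant dicts like ClaudeClient.conversation. The trim_history helper is hypothetical, and it drops old turns outright rather than summarizing them.

```python
def trim_history(conversation: list[dict], max_messages: int) -> list[dict]:
    """Keep at most max_messages recent messages, starting on a user turn."""
    if max_messages <= 0:
        return []
    trimmed = conversation[-max_messages:]
    # Start on a user turn (the conventional, safe starting point)
    while trimmed and trimmed[0]["role"] != "user":
        trimmed = trimmed[1:]
    return trimmed


history = [
    {"role": "user", "content": "Q1"},
    {"role": "assistant", "content": "A1"},
    {"role": "user", "content": "Q2"},
    {"role": "assistant", "content": "A2"},
    {"role": "user", "content": "Q3"},
]
print([m["content"] for m in trim_history(history, 4)])  # ['Q2', 'A2', 'Q3']
```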

How do I reduce API costs?

Use Haiku for simple tasks, cache responses, implement conversation summaries for long chats, and batch similar requests together.
