OpenClaw Memory Files: Give Your AI Assistant Persistent Memory

Every time you start a new chat with an AI assistant, you're starting from scratch. No memory of last week's project, no context about your preferences, no recollection of that decision you made on Tuesday. It's exhausting.

OpenClaw solves this with a simple but powerful memory file system. Your AI reads a set of files at the start of each session to rebuild context — and writes to those same files throughout the day to preserve what matters. This guide shows you exactly how it works and how to use it effectively.

How OpenClaw Memory Works

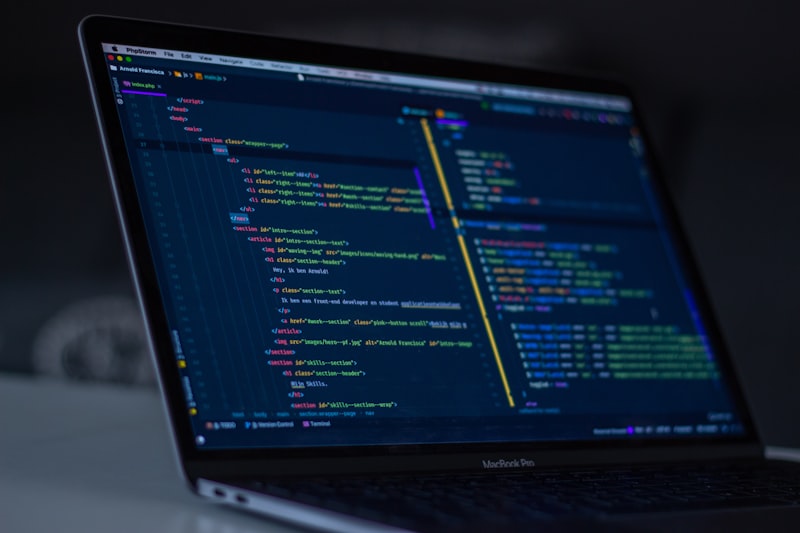

OpenClaw uses plain text files as persistent memory — readable, editable, and always under your control

OpenClaw's memory system is built on a simple idea: files outlive sessions. While an AI's in-context memory evaporates when the chat ends, files on disk persist until you change or delete them.

The system uses three layers:

- SOUL.md: Your AI's identity, values, and core behaviors

- MEMORY.md: Long-term curated memories (distilled from daily logs)

- memory/YYYY-MM-DD.md: Daily raw notes, decisions, and events

When a new session starts, OpenClaw reads these files before responding to your first message. The result: an AI that genuinely remembers your context, preferences, and history.
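As a rough mental model, session startup amounts to concatenating the layers in a fixed order. The sketch below is not OpenClaw's actual loader: the function name build_context is hypothetical, and including only today's daily log is an assumption made for illustration.

```shell
# Hypothetical sketch of session-start context assembly (not OpenClaw's code).
# Prints each memory layer that exists, in order, with a header line.
build_context() {
  workspace="$1"                       # e.g. ~/.openclaw/workspace
  today="$(date +%Y-%m-%d)"
  for f in "$workspace/SOUL.md" \
           "$workspace/MEMORY.md" \
           "$workspace/memory/$today.md"; do
    if [ -f "$f" ]; then
      # Show the path relative to the workspace as a section header
      printf '=== %s ===\n' "${f#"$workspace"/}"
      cat "$f"
      printf '\n'
    fi
  done
}
```

Running build_context ~/.openclaw/workspace prints roughly what the model would see before your first message, which is a quick way to spot stale or noisy entries.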

Step 1: Understand Your Memory Directory

Your workspace directory — memory files live alongside your project files

Your OpenClaw workspace (typically ~/.openclaw/workspace/) is where all memory files live. Navigate there and list the contents:

ls ~/.openclaw/workspace/

You'll see files like SOUL.md, MEMORY.md, USER.md, and a memory/ folder. Each serves a distinct purpose:

- SOUL.md: Don't edit this unless you're customizing your AI's personality

- MEMORY.md: Your primary long-term memory store; edit freely

- USER.md: Information about you (name, preferences, context)

- memory/: Daily log files named by date

Start by reading MEMORY.md to understand what your AI already knows about your work. If it's empty or sparse, that's your baseline — you'll build it up over time.

Step 2: Write Your First Memory Entry

Memories are written in natural language — no special syntax required

The simplest way to add a memory is to tell your AI directly:

"Remember that I prefer TypeScript over JavaScript for all new projects. Write this to MEMORY.md."

Your AI will immediately open MEMORY.md and add a structured entry. You can verify by reading the file:

cat ~/.openclaw/workspace/MEMORY.md

You can also add memories manually by editing the file directly. The format is flexible — OpenClaw reads natural language, not structured JSON. A typical MEMORY.md might look like:

## Work Context

- Primary projects: claw.ist (blog), automarck.com (SaaS)

- Hosting: AWS us-east-1, Ubuntu 24.04

- Prefer concise responses, no unnecessary caveats

## Preferences

- TypeScript over JavaScript

- Tailwind CSS for styling

- Git commits in imperative mood ("Add feature" not "Added feature")

## Key Decisions

- Feb 2026: Moved from single-agent to multi-agent architecture

- Using Claude Sonnet as default, Opus for complex reasoning tasks
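If your MEMORY.md is empty, you could seed it with the three headings from the example above. This is only a convenience sketch: init_memory is a hypothetical helper, not an OpenClaw command, and the headings are just the ones used in this guide.

```shell
# Hypothetical helper: create a starter MEMORY.md, but never overwrite
# a file that already has content.
init_memory() {
  file="$1/MEMORY.md"                  # $1 is the workspace directory
  if [ -s "$file" ]; then
    echo "MEMORY.md already has content; leaving it alone" >&2
    return 0
  fi
  mkdir -p "$1"
  cat > "$file" <<'EOF'
## Work Context

## Preferences

## Key Decisions
EOF
}
```

Call it as init_memory ~/.openclaw/workspace, then fill in the sections by hand or by asking your AI to write to them.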

Step 3: Use Daily Log Files

Daily logs capture raw events; MEMORY.md captures distilled wisdom

Daily logs in memory/YYYY-MM-DD.md capture everything that happens during the day — decisions made, problems solved, context established. These are raw notes, not curated summaries.

OpenClaw writes to these automatically during sessions. You can also write to them directly:

"Log this to today's memory file: Deployed new version of claw.ist, fixed image loading bug on mobile."

At the end of the day (or during a heartbeat), your AI reviews daily logs and updates MEMORY.md with anything worth keeping long-term. Think of it like a human reviewing their daily journal and updating their mental model.

To manually review today's and yesterday's logs (the -d "yesterday" option is GNU date syntax; on macOS the equivalent is -v-1d):

cat ~/.openclaw/workspace/memory/$(date +%Y-%m-%d).md

cat ~/.openclaw/workspace/memory/$(date -d "yesterday" +%Y-%m-%d).md
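You can also append to today's log from the shell without going through the AI. The helper below is a minimal sketch: log_today is a hypothetical name, and the "- HH:MM text" line format is an assumption, since the logs are free-form text.

```shell
# Hypothetical helper: append a timestamped bullet to today's daily log.
log_today() {
  dir="$1/memory"                      # $1 is the workspace directory
  mkdir -p "$dir"
  # Pass "-" as an argument so printf does not mistake it for an option
  printf '%s %s %s\n' '-' "$(date +%H:%M)" "$2" \
    >> "$dir/$(date +%Y-%m-%d).md"
}
```

For example: log_today ~/.openclaw/workspace "Deployed new version of claw.ist".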

Step 4: Trigger Memory Recall in Conversations

Memory is loaded at session start — but you can also ask for specific recalls

OpenClaw loads your core memory files at the start of every session. But you can also ask for specific recalls mid-conversation:

"Check memory for anything about the automarck.com deployment"

"What do we know about my database setup?"

"Read this week's daily logs and summarize what we accomplished"

The AI will read the relevant files and synthesize the context for you. This is especially useful when picking up a project after a few days away — instead of re-explaining everything, just ask for a memory recap.
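Because the files are plain text, you can run a similar lookup yourself. This sketch is a literal keyword search, which is cruder than the synthesis the AI performs; recall is a hypothetical function name.

```shell
# Hypothetical helper: case-insensitive literal search across MEMORY.md
# and the daily logs. Output lines look like path:line-number:text.
recall() {
  workspace="$1"
  keyword="$2"
  # -r recurse into memory/, -i ignore case, -n show line numbers,
  # -F treat the keyword as a fixed string rather than a regex
  grep -rinF -- "$keyword" "$workspace/MEMORY.md" "$workspace/memory" 2>/dev/null
}
```

For example, recall ~/.openclaw/workspace automarck lists every line that mentions that project. The function exits non-zero when nothing matches.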

Step 5: Prune and Maintain Your Memory

Regular memory maintenance keeps context sharp and reduces token overhead

Memory files can grow large over time, which slows session startup and adds noise. Schedule a regular memory review — monthly works well:

- Read through MEMORY.md and flag anything outdated

- Delete completed project notes

- Update preferences that have changed

- Archive old daily logs to a memory/archive/ folder

You can automate this with a cron job:

"Every Sunday at 9 AM, review the past week's memory logs and update MEMORY.md with anything worth keeping long-term."

See the OpenClaw cron jobs guide for how to set this up.
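The archiving step from the checklist above can also be done with a few lines of shell. This is a sketch under stated assumptions: archive_logs_before is a hypothetical helper, it decides by the date in the filename rather than the file's modification time, and it takes the cutoff date as an explicit argument to avoid platform-specific date arithmetic.

```shell
# Hypothetical helper: move daily logs dated before a cutoff into
# memory/archive/. The cutoff is given as YYYY-MM-DD.
archive_logs_before() {
  memdir="$1/memory"                   # $1 is the workspace directory
  cutoff="$2"
  mkdir -p "$memdir/archive"
  for f in "$memdir"/[0-9][0-9][0-9][0-9]-[0-9][0-9]-[0-9][0-9].md; do
    [ -f "$f" ] || continue            # the glob matched nothing
    day="$(basename "$f" .md)"
    # ISO dates sort lexically, so a string comparison is a date comparison
    if expr "$day" \< "$cutoff" >/dev/null; then
      mv "$f" "$memdir/archive/"
    fi
  done
}
```

For example, archive_logs_before ~/.openclaw/workspace 2026-01-01 moves every log from 2025 or earlier and leaves newer ones in place.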

Memory Best Practices

Good memory hygiene makes your AI assistant dramatically more useful

After using OpenClaw's memory system for a while, a few patterns consistently work best:

Write decisions, not tasks. Tasks get done and forgotten — decisions shape future behavior. "Decided to use Vercel over Netlify because of edge functions" is more valuable than "deployed site to Vercel."

Include the why. "Prefer short responses" is helpful. "Prefer short responses because I read on mobile during commute" is better — it lets the AI adapt intelligently.

Date important context. Memory gets stale. "Using Node 22" means little six months from now without a date. "As of Feb 2026: using Node 22" tells the AI when the fact was recorded, so it knows to verify that it is still current.

Don't over-engineer it. Plain text works great. You don't need special syntax, JSON schemas, or vector embeddings. The AI can read and reason about natural language just fine.
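None of this needs tooling, but if you add entries from the shell, a one-line helper can apply the "date important context" habit for you. The name remember is hypothetical, and appending to the end of the file (rather than under a specific heading) is a simplification.

```shell
# Hypothetical helper: append a dated bullet to the end of MEMORY.md.
remember() {
  # LC_ALL=C forces English month abbreviations such as "Feb"
  stamp="$(LC_ALL=C date '+%b %Y')"
  printf '%s As of %s: %s\n' '-' "$stamp" "$2" >> "$1/MEMORY.md"
}
```

For example, remember ~/.openclaw/workspace "using Node 22" appends a line of the form "- As of <month> <year>: using Node 22".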

Connecting Memory to Other OpenClaw Features

Memory files are shared across agents — subagents can read and write to the same memory store

One of the most powerful aspects of OpenClaw's memory system is that it's shared across the entire system. When you spawn a subagent for a complex task, it can read your memory files to understand context without you having to re-explain everything.

This is how the system achieves genuine continuity across:

- Main session ↔ subagent handoffs

- Cron jobs that run overnight

- Different AI models (Sonnet main, Opus for complex reasoning)

- Multiple projects running in parallel

The memory files act as shared state — a single source of truth that every part of your OpenClaw system can read from and write to.

Conclusion

Persistent memory transforms a stateless chatbot into a genuine long-term collaborator

OpenClaw's memory file system is one of its most underrated features. It takes five minutes to set up and pays dividends every single day — no more repeating yourself, no more re-establishing context, no more "as I mentioned last week" frustrations.

Start simple: just add a few key preferences and project notes to MEMORY.md. Let it grow organically as you use the system. Within a week, you'll wonder how you ever managed without it.

For more on OpenClaw's capabilities, see the complete CLI reference and the VPS setup guide.

Frequently Asked Questions

How much memory can OpenClaw hold? Technically, as much as fits in the model's context window, which varies by model but is typically 100,000+ tokens. In practice, keep MEMORY.md under 5,000 words for fast session startup. Daily logs can be longer since they're only read when needed.

Are memory files private? Yes. They live on your server (or local machine), and their contents leave it only when they are sent to the AI model as context during your sessions. The model itself doesn't retain them between sessions.

Can I have memory for different projects? Yes. You can structure MEMORY.md with project-specific sections, or create separate workspace directories for completely isolated projects.

What if the AI writes something wrong to memory? Just edit the file directly — it's plain text. You have full control over what's stored. The AI can also correct its own entries if you tell it what's wrong.
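The 5,000-word guideline for MEMORY.md mentioned above is easy to check from the shell. A minimal sketch, where check_memory_size is a hypothetical name and the limit defaults to the figure from this FAQ:

```shell
# Hypothetical helper: warn when MEMORY.md exceeds a word limit.
# Returns 0 when within the limit, 1 when over it.
check_memory_size() {
  limit="${2:-5000}"                   # optional second argument
  # tr strips the padding some wc implementations add
  words="$(wc -w < "$1/MEMORY.md" | tr -d ' ')"
  if [ "$words" -gt "$limit" ]; then
    echo "MEMORY.md has $words words (over $limit); consider pruning"
    return 1
  fi
  echo "MEMORY.md has $words words (within $limit)"
}
```

Run it as check_memory_size ~/.openclaw/workspace, or pass a second argument to use a different limit.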
