Comparing Claude Models: Haiku vs Sonnet vs Opus for Different Tasks

Choosing the right Claude model impacts performance, cost, and result quality. Anthropic offers three tiers—Haiku, Sonnet, and Opus—each optimized for different use cases. Understanding their strengths helps you balance speed, intelligence, and budget effectively.

This guide compares all three models across key dimensions: speed, reasoning ability, coding capabilities, cost, and ideal use cases. You'll learn when to use each model and how to switch dynamically based on task requirements.

The Claude Model Hierarchy

Three tiers balancing speed, intelligence, and cost

Claude's model family follows a deliberate progression from fastest to most capable:

Haiku prioritizes speed and cost-efficiency. It processes requests in seconds, handles simple queries well, and costs significantly less than larger models. Think of it as your quick-response specialist.

Sonnet balances capability and performance. It handles complex reasoning, coding tasks, and nuanced questions while maintaining reasonable speed. This is the workhorse model for most applications.

Opus delivers maximum intelligence for the hardest problems. It excels at advanced reasoning, complex analysis, and tasks requiring deep understanding. Use it when quality matters more than cost or speed.

The jump from Haiku to Sonnet provides large capability gains. The step from Sonnet to Opus is subtler, but it can be decisive in specific, challenging scenarios.

Speed and Latency Comparison

Haiku responds 3-5x faster than Opus for typical queries

Haiku generates responses in 1-3 seconds for short queries. This makes it ideal for real-time applications like chatbots, quick lookups, or simple automation where low latency matters.

Sonnet typically responds in 3-8 seconds depending on query complexity. The extra processing time enables better reasoning without frustrating wait times for most interactive use cases.

Opus takes 8-15+ seconds for complex reasoning tasks. The model performs additional analysis steps, resulting in higher quality but longer waits. For non-interactive batch processing, this delay is acceptable.

Speed varies with prompt length, complexity, and API load. During peak hours, all models slow slightly. For latency-critical applications, always test with realistic workloads.
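When testing with realistic workloads, it can help to compare models on median and tail latency rather than on a single run. The following is a minimal sketch that summarizes timings you have already collected. The function name `summarizeLatencies` and the sample values are hypothetical, and the nearest-rank percentile is just one reasonable choice.

```javascript
// Summarize measured response times (in milliseconds) for one model.
// The samples would come from your own timing harness.
function summarizeLatencies(samplesMs) {
  if (samplesMs.length === 0) {
    throw new Error('need at least one latency sample');
  }
  const sorted = [...samplesMs].sort((a, b) => a - b);
  // Nearest-rank percentile: smallest sample with at least p% of samples at or below it.
  const percentile = (p) => {
    const rank = Math.ceil((p / 100) * sorted.length);
    return sorted[Math.max(rank, 1) - 1];
  };
  return {
    count: sorted.length,
    min: sorted[0],
    median: percentile(50),
    p95: percentile(95),
    max: sorted[sorted.length - 1],
  };
}

// Hypothetical timings, not real measurements.
const latencySummary = summarizeLatencies([1200, 950, 3100, 1800, 1400]);
console.log(latencySummary);
```

Running the same prompts through each tier and comparing the `p95` values gives a more honest picture of worst-case waits than averages do.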

Our Claude API tutorial includes performance benchmarking code for accurate timing measurements.

Reasoning and Intelligence

Higher-tier models handle multi-step reasoning and subtle context better

Haiku handles straightforward tasks: simple Q&A, data formatting, basic classification, and template filling. It struggles with ambiguity, multi-step reasoning, or tasks requiring deep context understanding.

Example Haiku strengths:

- "Extract email addresses from this text"

- "Classify this support ticket as urgent/normal/low"

- "Summarize this paragraph in one sentence"

Sonnet excels at complex reasoning: code analysis, nuanced writing, strategic planning, and tasks requiring context awareness. It handles most real-world problems that don't require cutting-edge intelligence.

Example Sonnet strengths:

- "Review this code for security vulnerabilities and suggest fixes"

- "Analyze user feedback and identify the top 3 improvement priorities"

- "Generate a marketing strategy based on these customer interviews"

Opus tackles the hardest problems: advanced mathematics, complex code generation, subtle logical puzzles, and tasks requiring maximum intelligence. Use it when Sonnet produces unsatisfactory results.

Example Opus strengths:

- "Design a distributed system architecture handling 1M+ concurrent users"

- "Prove this mathematical theorem and explain edge cases"

- "Review this legal contract and identify potential liability issues"

For more on leveraging reasoning capabilities, see our advanced prompt engineering guide.

Coding Capabilities

All Claude models code, but quality varies significantly

Haiku writes basic scripts, fixes simple bugs, and explains code concepts. It's suitable for boilerplate generation, simple automation, and educational explanations. Don't rely on it for production code architecture.

Sonnet handles most coding tasks: feature implementation, debugging, refactoring, and framework integration. It understands complex codebases, suggests optimizations, and writes production-ready code for standard scenarios.

Opus excels at algorithmic challenges, system design, performance optimization, and unfamiliar frameworks. It produces more elegant solutions and catches subtle bugs that Sonnet might miss.

Real-world coding comparison:

- Haiku: "Write a function to sort an array" ✓

- Sonnet: "Implement OAuth2 authentication with refresh tokens" ✓

- Opus: "Design a lock-free concurrent queue with optimistic concurrency control" ✓

For practical coding applications, most developers find Sonnet sufficient. Reserve Opus for architecture decisions or particularly challenging algorithms.

Compare Claude with other coding assistants in our AI coding tools comparison.

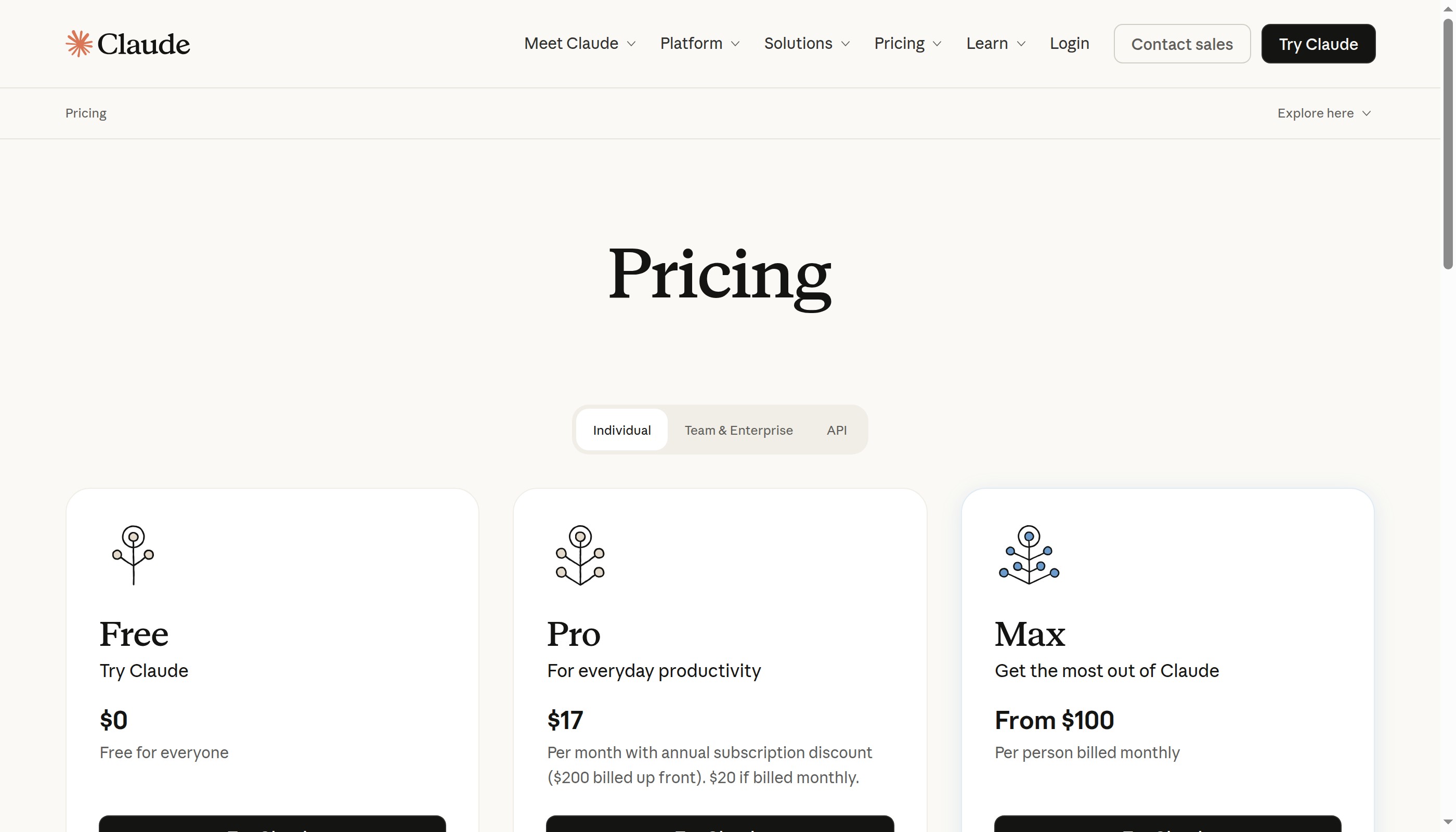

Cost Analysis

Balance capability needs with budget constraints

As of 2026, approximate pricing per million tokens (input/output):

- Haiku: $0.25 / $1.25

- Sonnet: $3.00 / $15.00

- Opus: $15.00 / $75.00

At these rates Sonnet costs 12x as much as Haiku, a premium that pays off on complex tasks Haiku cannot handle reliably. Opus costs 5x as much as Sonnet: worthwhile when quality is critical, wasteful for routine work.
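To make the price gap concrete, here is a small sketch that estimates the cost of a single request from the approximate rates listed above. The function name `estimateCost` and the example request size are assumptions for illustration. Check current pricing before relying on the numbers.

```javascript
// Approximate USD prices per million tokens, copied from the list above.
const PRICES_PER_MILLION = {
  haiku: { input: 0.25, output: 1.25 },
  sonnet: { input: 3.0, output: 15.0 },
  opus: { input: 15.0, output: 75.0 },
};

// Estimate the cost in USD of one request for a given tier.
function estimateCost(tier, inputTokens, outputTokens) {
  const price = PRICES_PER_MILLION[tier];
  if (!price) throw new Error(`unknown tier: ${tier}`);
  return (inputTokens * price.input + outputTokens * price.output) / 1e6;
}

// Hypothetical request size: 2,000 input tokens and 500 output tokens.
for (const tier of Object.keys(PRICES_PER_MILLION)) {
  console.log(tier, estimateCost(tier, 2000, 500).toFixed(6));
}
```

For that request size the estimate is about $0.0011 on Haiku, $0.0135 on Sonnet, and $0.0675 on Opus.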

Cost optimization strategies:

Use Haiku for high-volume, simple tasks like classification, extraction, or formatting. Route complex requests to Sonnet. Reserve Opus for tasks where Sonnet demonstrably fails or quality requirements justify the premium.

Implement dynamic model selection based on query complexity. Analyze the prompt first—simple questions go to Haiku, detailed analysis goes to Sonnet, expert-level reasoning goes to Opus.
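One way to approximate "analyze the prompt first" is a cheap heuristic that runs before any model call. The sketch below is only an illustration: the keyword patterns, the word-count thresholds, and the name `classifyComplexity` are all assumptions, and a production router would need tuning against real traffic.

```javascript
// Hypothetical heuristic: keywords and thresholds are illustrative assumptions.
const COMPLEX_PATTERN = /\b(prove|theorem|architecture|liability)\b/i;
const MODERATE_PATTERN = /\b(review|analyze|refactor|debug|strategy)\b/i;

// Return 'simple', 'moderate', or 'complex' for a prompt string.
function classifyComplexity(prompt) {
  const wordCount = prompt.split(/\s+/).filter(Boolean).length;
  if (COMPLEX_PATTERN.test(prompt) || wordCount > 400) return 'complex';
  if (MODERATE_PATTERN.test(prompt) || wordCount > 100) return 'moderate';
  return 'simple';
}

console.log(classifyComplexity('Extract email addresses from this text'));
console.log(classifyComplexity('Review this code for security vulnerabilities and suggest fixes'));
console.log(classifyComplexity('Prove this mathematical theorem and explain edge cases'));
```

Word-boundary matching keeps words like "improvement" from triggering the "prove" keyword. Keyword rules are brittle, so treat misroutes as data for refining the patterns.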

A well-optimized system might use 70% Haiku, 25% Sonnet, 5% Opus, achieving similar results to all-Opus at a fraction of the cost.
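The "fraction of the cost" claim can be checked with a quick calculation. This sketch uses the approximate rates from this section and the 70/25/5 split. It makes the simplifying assumption that every tier sees the same request size, and the helper names are hypothetical.

```javascript
// Approximate USD prices per million tokens, as listed earlier in this section.
const PRICES = {
  haiku: { input: 0.25, output: 1.25 },
  sonnet: { input: 3.0, output: 15.0 },
  opus: { input: 15.0, output: 75.0 },
};

// Traffic share per tier, from the 70/25/5 example above.
const MIX = { haiku: 0.7, sonnet: 0.25, opus: 0.05 };

function costPerRequest(tier, inputTokens, outputTokens) {
  const p = PRICES[tier];
  return (inputTokens * p.input + outputTokens * p.output) / 1e6;
}

// Average cost per request when traffic is split according to `mix`.
function blendedCost(mix, inputTokens, outputTokens) {
  return Object.entries(mix).reduce(
    (total, [tier, share]) => total + share * costPerRequest(tier, inputTokens, outputTokens),
    0
  );
}

// Hypothetical request size: 1,000 input tokens and 500 output tokens.
const blended = blendedCost(MIX, 1000, 500);
const allOpus = costPerRequest('opus', 1000, 500);
console.log((blended / allOpus).toFixed(3)); // blended cost as a share of all-Opus
```

Under these assumptions the mixed deployment costs roughly 11% of an all-Opus one. In practice simple requests also tend to be shorter, which would shift the figure.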

Learn cost management techniques in our Claude API rate limiting guide.

Use Case Recommendations

Match models to tasks for optimal performance and cost

Customer Support Chatbot:

- First response: Haiku (fast, cheap, handles 80% of queries)

- Complex issues: Escalate to Sonnet

- Escalations requiring judgment: Human handoff

Content Generation:

- Outlines and drafts: Sonnet

- Final polish and editing: Sonnet or Opus for premium content

- Bulk meta descriptions: Haiku

Code Review:

- Style checking and basic bugs: Sonnet

- Security audit and architecture review: Opus

- Simple linting: Haiku

Data Analysis:

- Descriptive statistics and summaries: Haiku

- Trend analysis and insights: Sonnet

- Predictive modeling and deep analysis: Opus

Email Management:

- Classification and routing: Haiku

- Draft responses: Sonnet

- Complex negotiations: Opus

Match model to task value. Don't use Opus to format CSV files or Haiku to architect systems.

Explore automation workflows in our AI workflow automation guide.

Switching Models Dynamically

Configure intelligent model routing for optimal results

OpenClaw supports dynamic model selection based on task requirements. Set your default model in configuration, then override for specific requests.

Configure default model:

openclaw config set model anthropic/claude-sonnet-4-5

Override for specific request:

openclaw chat --model anthropic/claude-opus-4 "Design a distributed caching system..."

Implement routing logic in your automation:

// Map a coarse complexity label to a model ID; anything else falls through to Opus.
function selectModel(taskComplexity) {
  if (taskComplexity === 'simple') return 'claude-haiku-4';
  if (taskComplexity === 'moderate') return 'claude-sonnet-4-5';
  return 'claude-opus-4';
}

Monitor which tasks succeed with which models, then adjust routing rules. Some users find 95% of work succeeds with Sonnet, using Opus only for the remaining 5%.
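Monitoring can start as a simple tally of outcomes per task type and model. The class below is a hypothetical in-memory sketch, not part of OpenClaw. How you decide that a response "succeeded" (user feedback, validation checks, manual review) is left as an assumption.

```javascript
// Hypothetical in-memory tracker; a real system would persist these counts.
class RoutingStats {
  constructor() {
    this.counts = new Map(); // key: "taskType|model" -> { ok, total }
  }

  record(taskType, model, succeeded) {
    const key = `${taskType}|${model}`;
    const entry = this.counts.get(key) || { ok: 0, total: 0 };
    entry.total += 1;
    if (succeeded) entry.ok += 1;
    this.counts.set(key, entry);
  }

  // Observed success rate, or null when there is no data yet.
  successRate(taskType, model) {
    const entry = this.counts.get(`${taskType}|${model}`);
    return entry ? entry.ok / entry.total : null;
  }

  // Return the first model whose observed success rate meets the target.
  // `modelsCheapestFirst` must be ordered from cheapest to most expensive.
  cheapestReliableModel(taskType, modelsCheapestFirst, targetRate) {
    for (const model of modelsCheapestFirst) {
      const rate = this.successRate(taskType, model);
      if (rate !== null && rate >= targetRate) return model;
    }
    // Fall back to the most capable model when nothing has proven itself yet.
    return modelsCheapestFirst[modelsCheapestFirst.length - 1];
  }
}

const tracker = new RoutingStats();
tracker.record('summarize', 'claude-haiku-4', true);
console.log(tracker.successRate('summarize', 'claude-haiku-4'));
```

With enough samples, `cheapestReliableModel` can feed the routing rules directly, so a task type moves to a cheaper tier only after that tier has met your success target.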

For implementation details, see our OpenClaw API integration guide.

Performance Tips for Each Model

Maximize results from each model tier

Haiku optimization:

- Keep prompts under 500 tokens for best speed

- Use structured output formats (JSON) for consistency

- Provide clear examples rather than relying on inference

- Avoid ambiguity—be explicit about requirements
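Structured output is most useful when the caller validates it. As a sketch, suppose the ticket classifier mentioned earlier is asked to reply with JSON such as {"priority": "urgent"}. The field name, label set, and function name here are assumptions for illustration.

```javascript
// Hypothetical label set for a ticket classifier; adjust to your own schema.
const ALLOWED_PRIORITIES = ['urgent', 'normal', 'low'];

// Parse a model reply that is expected to look like {"priority": "urgent"}.
// Returns the priority string, or null when the reply does not match the schema.
function parsePriority(rawReply) {
  let parsed;
  try {
    parsed = JSON.parse(rawReply);
  } catch (err) {
    return null; // not valid JSON
  }
  if (parsed === null || typeof parsed !== 'object') return null;
  return ALLOWED_PRIORITIES.includes(parsed.priority) ? parsed.priority : null;
}

console.log(parsePriority('{"priority": "urgent"}'));
console.log(parsePriority('Sure! This ticket looks urgent.'));
```

A null result is a natural signal to retry with a stricter prompt or to escalate the request to a larger model.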

Sonnet optimization:

- Use system prompts to establish context efficiently

- Leverage chain-of-thought for complex reasoning

- Provide reference materials inline rather than asking the model to recall them

- Break very large tasks into smaller subtasks

Opus optimization:

- Invest time in comprehensive prompts; the model rewards detail

- Use extended thinking mode for maximum capability

- Provide challenging tasks worthy of the model's intelligence

- Compare results between Sonnet and Opus to verify value

For all models, clear prompts yield better results than clever ones. State requirements explicitly and provide examples.

Find advanced prompting techniques in our Claude prompt engineering guide.

When to Use Extended Thinking

Extended thinking mode enables deeper analysis for complex problems

Claude's extended thinking mode (available in Sonnet and Opus) allows the model to "think out loud" before responding, improving reasoning quality for complex tasks.

Enable extended thinking when:

- Task requires multi-step logical reasoning

- Problem has multiple valid approaches requiring comparison

- Answer quality matters more than response speed

- Question involves subtle trade-offs or edge cases

Skip extended thinking when:

- Speed is critical (chatbots, real-time apps)

- Query is straightforward and factual

- Using Haiku (not available in base tier)

- Generating high-volume simple responses

Extended thinking increases token usage (longer responses) but often produces significantly better results for complex queries. Test with and without to evaluate impact.
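To test with and without extended thinking, it helps to build both request variants from one function. The sketch below assembles a request body in the shape used by Anthropic's Messages API, where thinking is enabled through a `thinking` object with a token budget. The model ID, default budgets, and the name `buildRequest` are placeholders, so confirm parameter names and limits against the current API reference.

```javascript
// Build a Messages API request body, optionally with extended thinking enabled.
// The model ID and token budgets are placeholders; check the current API docs.
function buildRequest(prompt, options = {}) {
  const { useThinking = false, budgetTokens = 4000, maxTokens = 8000 } = options;
  const body = {
    model: 'claude-sonnet-4-5',
    max_tokens: maxTokens,
    messages: [{ role: 'user', content: prompt }],
  };
  if (useThinking) {
    // The thinking budget counts toward max_tokens, so it must be smaller.
    if (budgetTokens >= maxTokens) {
      throw new Error('budgetTokens must be smaller than maxTokens');
    }
    body.thinking = { type: 'enabled', budget_tokens: budgetTokens };
  }
  return body;
}

const question = 'Compare two caching strategies and their failure modes.';
console.log(JSON.stringify(buildRequest(question), null, 2));
console.log(JSON.stringify(buildRequest(question, { useThinking: true }), null, 2));
```

Sending both variants for the same prompts and comparing quality, latency, and token usage shows whether the extra thinking tokens are paying for themselves.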

Learn more in our Claude extended thinking mode guide.

Conclusion

Choose the right Claude model for each task

Haiku delivers speed and efficiency for simple tasks. Sonnet provides excellent capability for most real-world problems. Opus tackles the hardest challenges requiring maximum intelligence. The best strategy combines all three.

Start with Sonnet as your default. If responses are fast enough and good enough, that's your answer. Drop to Haiku for high-volume simple tasks. Escalate to Opus when quality demands justify the cost.

Monitor usage patterns over time. You'll discover which tasks need which models, allowing increasingly sophisticated routing logic. The goal: maximum result quality per dollar spent.

Ready to implement? Check out our Claude API Python tutorial, OpenClaw setup guide, and AI assistant deployment guide to build your intelligent system.
