OpenClaw Browser Automation: Scrape, Click, and Control the Web with AI

Most AI assistants can talk about the web. OpenClaw can actually use it.

The built-in browser tool gives Claude direct control over a Chromium browser — navigate pages, take screenshots, click buttons, fill forms, extract data, and interact with any website just by describing what you want. No Playwright scripts. No Selenium boilerplate. Just natural language commands backed by a real browser engine.

This tutorial walks through the browser tool's core capabilities and shows you how to put them to work in real automation workflows.

What the OpenClaw Browser Tool Can Do

OpenClaw's browser tool provides AI-driven control over a full Chromium browser instance

The browser tool supports a range of actions that mirror what a human does when browsing the web:

- Navigate to any URL

- Snapshot the page — captures the accessibility tree for AI to read and interact with

- Screenshot — captures a visual image of the current state

- Act — perform clicks, typing, form fills, and keyboard shortcuts

- Console — read JavaScript console output for debugging

- Dialog — accept or dismiss browser alerts

What makes it powerful is the combination of snapshot + act. The snapshot gives Claude a structured view of every clickable element, input field, and piece of text on the page. From that, it can precisely target any element without needing CSS selectors or XPath.
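
To make the snapshot + act idea concrete, here is a minimal sketch of how a structured snapshot lets you target an element by role and name instead of by selector. The `Node` shape, the `ref` field, and the `find_target` helper are hypothetical illustrations, not OpenClaw's actual snapshot format or API.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional


# Hypothetical node shape; the real snapshot format may differ.
@dataclass
class Node:
    role: str
    name: str = ""
    ref: str = ""  # assumed handle that an "act" step could point at
    children: List["Node"] = field(default_factory=list)


INTERACTIVE_ROLES = {"button", "link", "textbox", "combobox", "checkbox"}


def walk(node: Node) -> Iterator[Node]:
    """Yield every node in the tree, depth first."""
    yield node
    for child in node.children:
        yield from walk(child)


def find_target(root: Node, role: str, name: str) -> Optional[Node]:
    """Return the first interactive node with this role and (case-insensitive) name."""
    wanted = name.strip().lower()
    for node in walk(root):
        if (
            node.role in INTERACTIVE_ROLES
            and node.role == role
            and node.name.strip().lower() == wanted
        ):
            return node
    return None


page = Node("document", "Pricing", children=[
    Node("heading", "Plans"),
    Node("form", children=[
        Node("textbox", "Email", ref="e1"),
        Node("button", "Start free trial", ref="e2"),
    ]),
])

target = find_target(page, "button", "start free trial")
print(target.ref if target else "not found")  # prints "e2"
```

The point of the sketch is that the lookup depends only on what the element is and what it is called, so it keeps working when class names or DOM nesting change.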

Step 1: Enable the Browser Tool in Your Session

Browser capabilities are available by default in OpenClaw sessions with the appropriate profile

The browser tool is available in OpenClaw by default — no installation needed. To use it, simply ask Claude to open a browser or navigate to a URL in your chat session.

OpenClaw supports two browser profiles:

- profile="openclaw": An isolated browser instance managed by OpenClaw. Best for automation tasks, web scraping, and anything where you want a clean, controlled environment.

- profile="chrome": Attaches to your existing Chrome browser via the OpenClaw Browser Relay extension. Best when you need to act on a tab you're already viewing.

For automation workflows, use the openclaw profile. For interactive assistance while you browse, use the chrome profile.

The browser runs headless by default on servers, meaning no visible window — just the engine. On desktop installs, you can watch the browser in action.

Step 2: Navigate and Capture Page Content

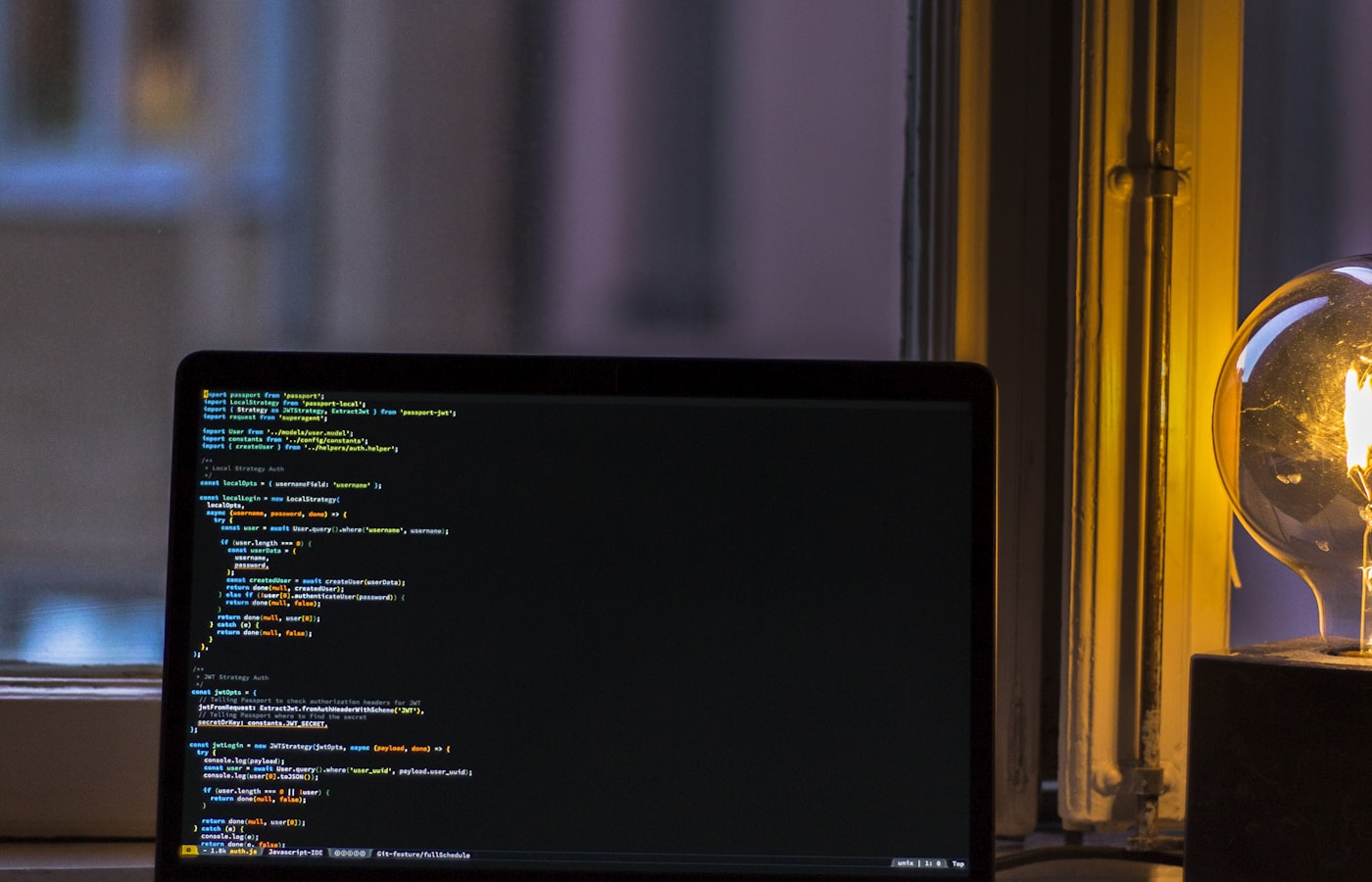

Extracted web data flows directly into your AI workflow for processing and analysis

The most common use case is reading a web page. Ask OpenClaw to fetch a URL and summarize, extract, or analyze the content:

"Go to https://example.com/pricing and tell me what the Pro plan includes."

Behind the scenes, OpenClaw navigates to the URL, takes a snapshot of the accessibility tree, reads all the text and structure, and answers your question — all without you having to copy-paste anything.

For data extraction at scale, you can chain multiple navigations:

"Visit each URL in this list, extract the product name and price from each page, and give me a CSV."

OpenClaw handles the navigation loop, extraction, and formatting automatically.
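
If you prefer to do the formatting step yourself, the sketch below shows one way to turn per-page results into CSV with Python's standard `csv` module. The record shape and the `records_to_csv` helper are assumptions for illustration; the data is made up and is not output from OpenClaw.

```python
import csv
import io
from typing import Dict, List


def records_to_csv(records: List[Dict[str, str]], columns: List[str]) -> str:
    """Serialize records to CSV text, leaving missing fields empty."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=columns, extrasaction="ignore", lineterminator="\n"
    )
    writer.writeheader()
    for record in records:
        # Fill gaps explicitly so a failed extraction shows up as an empty cell.
        writer.writerow({col: record.get(col, "") for col in columns})
    return buffer.getvalue()


# Illustrative data, one dict per visited page.
extracted = [
    {"url": "https://example.com/a", "product": "Widget", "price": "19.99"},
    {"url": "https://example.com/b", "product": "Gadget, large", "price": "24.50"},
    {"url": "https://example.com/c", "product": "Gizmo"},  # price not found
]

csv_text = records_to_csv(extracted, ["product", "price"])
print(csv_text)
```

Using `csv.DictWriter` rather than joining strings by hand means values that contain commas, such as "Gadget, large", are quoted correctly.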

Pro tip: For pages that require JavaScript to render (SPAs, dynamic content), the snapshot action waits for the DOM to settle before reading. This handles most React and Vue apps cleanly.
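
The idea of waiting for a page to settle can be sketched as polling some measure of the page until it stops changing. This is a generic illustration, not OpenClaw's internal wait logic; `wait_until_stable` and the simulated element counts are hypothetical.

```python
import time
from typing import Callable


def wait_until_stable(
    read_state: Callable[[], object],
    quiet_polls: int = 3,
    interval: float = 0.1,
    timeout: float = 5.0,
) -> bool:
    """Return True once read_state() gives the same value quiet_polls times in a row.

    Returns False if the timeout passes before the value stops changing.
    """
    deadline = time.monotonic() + timeout
    last = read_state()
    stable = 1
    while stable < quiet_polls:
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
        current = read_state()
        if current == last:
            stable += 1
        else:
            last, stable = current, 1
    return True


# Simulated page whose element count grows while it renders, then stops.
counts = iter([10, 25, 40, 40, 40, 40])
settled = wait_until_stable(lambda: next(counts), quiet_polls=3, interval=0.01)
print(settled)  # prints True
```

Pages that never stop changing (live tickers, animations driven by DOM updates) would hit the timeout in a scheme like this, which is why a bounded wait matters.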

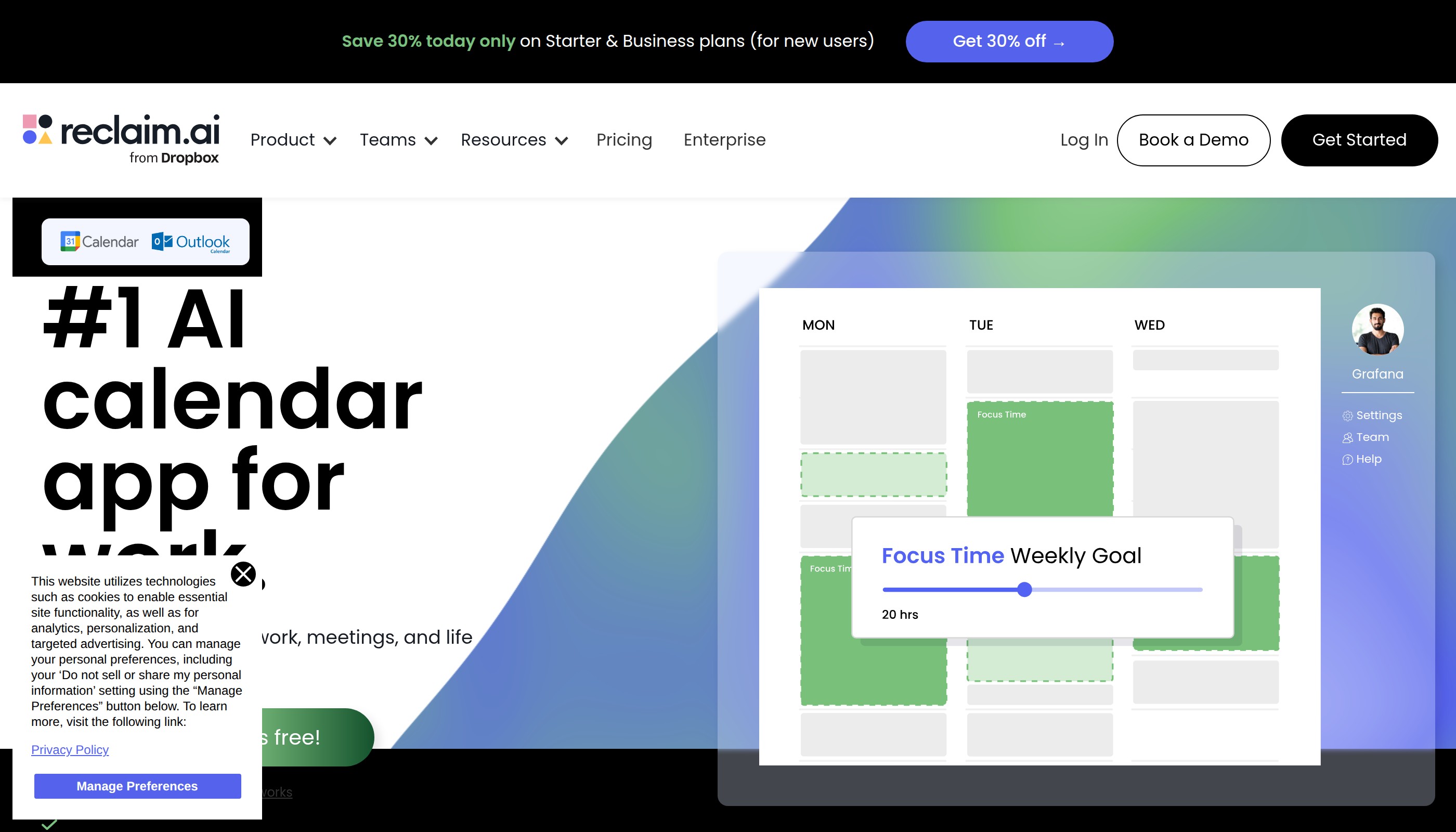

Step 3: Automate Form Filling and Clicks

Browser automation enables end-to-end workflows including login, form submission, and data entry

The act action is where things get really useful. You can instruct OpenClaw to:

- Click buttons and links by their label

- Type into input fields

- Select dropdown values

- Scroll to specific sections

- Submit forms

A real example: automating a login flow.

"Go to app.example.com/login. Type my username and password into the login form and click Sign In."

OpenClaw takes a snapshot to find the username field, password field, and Sign In button — then executes each action in sequence. It handles aria-label, placeholder text, and visible labels, so it finds elements the same way a human would.

For workflows that span multiple pages — like filling out a multi-step signup form — OpenClaw navigates the entire flow, snapshotting each page to confirm state before proceeding.
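
The login example can be sketched as two steps: resolve each human-readable name to an element, then build an ordered action plan. The element records, the field names, and the lookup order (aria-label, then visible label, then placeholder) are assumptions for illustration, not OpenClaw's documented behavior.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical element records, as a snapshot might describe a login form.
elements: List[Dict[str, str]] = [
    {"ref": "e1", "aria_label": "", "label": "", "placeholder": "Username"},
    {"ref": "e2", "aria_label": "Password", "label": "", "placeholder": ""},
    {"ref": "e3", "aria_label": "", "label": "Sign In", "placeholder": ""},
]


def resolve(elements: List[Dict[str, str]], wanted: str) -> Optional[str]:
    """Find an element ref by name, trying each name source in turn."""
    key = wanted.strip().lower()
    for source in ("aria_label", "label", "placeholder"):
        for element in elements:
            if element.get(source, "").strip().lower() == key:
                return element["ref"]
    return None


# The instruction "type username and password, then click Sign In" as a plan.
plan: List[Tuple[str, str]] = [
    ("type", "username"),
    ("type", "password"),
    ("click", "sign in"),
]
steps = [(action, resolve(elements, name)) for action, name in plan]
print(steps)  # prints [('type', 'e1'), ('type', 'e2'), ('click', 'e3')]
```

A step that resolves to `None` is a useful signal that the page is not in the expected state, which is the moment to take a fresh snapshot before continuing.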

Step 4: Screenshot for Verification and Monitoring

Screenshots provide visual confirmation that automation steps completed correctly

Screenshots serve two important purposes: verification and monitoring.

Verification: After executing actions, take a screenshot to confirm the result. If you automated a form submission, a screenshot of the confirmation page proves it worked.

Monitoring: Set up a cron job with OpenClaw to screenshot a dashboard, status page, or price tracker on a schedule. If something changes, OpenClaw can alert you.

"Every hour, screenshot https://status.myapp.com and notify me via Discord if anything on the page has changed since last check."

This kind of visual monitoring doesn't require you to know the page's HTML structure. OpenClaw compares screenshots over time and flags visual differences.
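
As a rough illustration of change detection between checks, the sketch below compares a hash of each screenshot's bytes with the previous one. This is a deliberately simple stand-in, not how OpenClaw compares screenshots: a byte-level hash flags any difference at all (including a clock or a rotating banner), whereas a real visual diff would tolerate small changes. The function names are hypothetical.

```python
import hashlib
from typing import Optional, Tuple


def fingerprint(image_bytes: bytes) -> str:
    """Return a SHA-256 hex digest of the raw screenshot bytes."""
    return hashlib.sha256(image_bytes).hexdigest()


def has_changed(previous: Optional[str], image_bytes: bytes) -> Tuple[bool, str]:
    """Compare against the previous fingerprint; the first check never alerts."""
    current = fingerprint(image_bytes)
    changed = previous is not None and previous != current
    return changed, current


# Stand-ins for three hourly screenshots (real ones would be PNG bytes).
last: Optional[str] = None
results = []
for shot in [b"status: ok", b"status: ok", b"status: degraded"]:
    changed, last = has_changed(last, shot)
    results.append(changed)

print(results)  # prints [False, False, True]
```

For an hourly schedule like the one in the prompt above, the standard cron expression is `0 * * * *`.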

Screenshots are also useful for debugging — when an automation fails, a screenshot of the current state shows exactly where things went wrong.

Common Use Cases for Browser Automation in OpenClaw

The browser tool unlocks a wide range of practical workflows:

- Price monitoring — Track product prices across retailer websites and alert when they drop

- Lead research — Automatically visit LinkedIn profiles or company pages and extract contact info

- Competitive intelligence — Monitor competitor blogs, product pages, or job listings

- Form submission — Automate repetitive data entry across web portals

- Content scraping — Extract articles, reviews, or structured data for analysis

- Testing — Verify that your own web apps render correctly across different scenarios

- Screenshot reports — Generate visual snapshots of dashboards for weekly reports

For more on OpenClaw's automation capabilities, see the OpenClaw cron automation tutorial and subagent delegation guide.

Frequently Asked Questions

Common questions about browser automation in OpenClaw

Does the browser tool work with sites that require login? Yes. You can provide credentials and OpenClaw will handle the login flow. For recurring automations, you can store session cookies to avoid re-authentication.
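
One way to think about stored sessions: persist the cookies after a successful login, then on the next run reuse only those that have not expired and log in again if none remain. The cookie fields and helper names below are hypothetical and do not describe OpenClaw's storage format.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import Dict, List, Optional


def save_cookies(path: Path, cookies: List[Dict]) -> None:
    """Write cookies to disk as JSON."""
    path.write_text(json.dumps(cookies, indent=2))


def load_valid_cookies(path: Path, now: Optional[float] = None) -> List[Dict]:
    """Load cookies whose 'expires' (Unix seconds, assumed field) is in the future."""
    if not path.exists():
        return []
    now = time.time() if now is None else now
    cookies = json.loads(path.read_text())
    return [c for c in cookies if c.get("expires", 0) > now]


with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "cookies.json"
    save_cookies(store, [
        {"name": "session", "value": "abc", "domain": "app.example.com", "expires": 2000},
        {"name": "old", "value": "xyz", "domain": "app.example.com", "expires": 500},
    ])
    valid = load_valid_cookies(store, now=1000)
    needs_login = not valid

print([c["name"] for c in valid], needs_login)  # prints ['session'] False
```

A stored session cookie grants the same access as the password it replaced, so a file like this should be readable only by the account that runs the automation.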

Can it handle CAPTCHAs? CAPTCHAs that score browser signals in the background rather than showing a puzzle (for example reCAPTCHA v3, or hCaptcha in its passive mode) are partially handled by the browser profile. CAPTCHAs that require solving a visual challenge are not bypassed; OpenClaw will report the block and stop.

Is the browser isolated? The openclaw profile runs in an isolated Chromium instance with no access to your personal browser history or cookies. The chrome profile uses your real Chrome — use it carefully.

How does it differ from web_fetch? web_fetch retrieves raw HTML/text via HTTP: it is fast, but it can't handle JavaScript-rendered content or interactive elements. The browser tool runs a full browser engine: it is slower, but it handles dynamic SPAs, buttons, forms, and anything a real browser can.
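
A rough heuristic for choosing between the two: if the raw HTML contains scripts but almost no visible text, the page is probably rendered client-side and needs the browser tool. The sketch below uses only Python's standard `html.parser`; the `likely_needs_browser` helper and its 200-character threshold are assumptions to tune, not part of OpenClaw.

```python
from html.parser import HTMLParser
from typing import List


class VisibleText(HTMLParser):
    """Collect text outside <script>/<style> and count script tags."""

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0
        self.script_count = 0
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip_depth += 1
            if tag == "script":
                self.script_count += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth and data.strip():
            self.chunks.append(data.strip())


def likely_needs_browser(html: str, min_chars: int = 200) -> bool:
    """Guess that a page is client-rendered: it has scripts but little visible text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    visible = " ".join(parser.chunks)
    return parser.script_count > 0 and len(visible) < min_chars


spa_shell = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'
static_page = (
    "<html><body><h1>Pricing</h1><p>"
    + "The Pro plan includes priority support. " * 10
    + "</p></body></html>"
)

print(likely_needs_browser(spa_shell), likely_needs_browser(static_page))  # prints True False
```

The check is cheap because it runs on the same HTML a plain HTTP fetch already returned, so it can decide whether the slower browser path is worth taking.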

Can I use proxies with browser automation? Yes. OpenClaw supports proxy configuration at the browser level, including residential proxies. See TOOLS.md for proxy setup details.

Conclusion

Browser automation closes the gap between AI understanding and real-world web interaction

OpenClaw's browser tool bridges the gap between AI reasoning and web interaction. Instead of manually clicking through pages or writing brittle scraper scripts, you describe what you want in plain language and the AI handles the rest.

The combination of snapshot (for reading), act (for interacting), and screenshot (for verifying) covers the full lifecycle of any web automation task. Pair it with OpenClaw cron jobs to schedule these workflows and you have a fully autonomous web agent running 24/7.

The browser engine is powered by Playwright under the hood; for its official documentation, see playwright.dev. For browser automation best practices, the Puppeteer documentation and Selenium guides are also worth reading.

Start with a simple use case — monitoring a page or extracting data from a site you visit regularly. Once you see how fast it works, you'll find browser automation tasks everywhere.
