Cursor vs GitHub Copilot: Which AI Coding Assistant Wins in 2026?

GitHub Copilot now has a free tier and has massively expanded its feature set. But is it as good as Cursor, the AI-first code editor that developers can't stop talking about?

After extensive testing with real-world coding tasks, the answer is clear: for serious development work, Cursor's agent mode and multi-file editing capabilities remain significantly ahead. But Copilot has its place, especially for developers on a budget.

This guide breaks down where each tool excels, where it fails, and how to choose between them for your workflow.

The Multi-File Editing Test

Multi-file editing is where AI coding assistants prove their value

The most important feature in modern AI coding assistants is the ability to edit multiple files intelligently. This is where Cursor shines and Copilot struggles.

Test 1: Adding API endpoints

I asked both tools to add the ability to read and write a comments collection to an existing admin API.

Cursor result: Found the correct files automatically, made the right updates to models and resolvers, hooked everything up correctly. Worked first time.

Copilot result: Failed to find the correct files and made incorrect updates. None of several attempts produced working code.
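To make the scope of this task concrete, here is a minimal sketch of the kind of change involved: a model, a store for the collection, and read/write resolvers. The names `CommentRecord`, `CommentStore`, `comments`, and `addComment` are hypothetical, and the GraphQL-style resolver map is an assumption, since the actual admin API schema is not shown here. In a real project these pieces would usually sit in separate files, which is why file discovery matters.

```typescript
// Hypothetical model: the real schema from the test project is not shown.
interface CommentRecord {
  id: number;
  postId: number;
  author: string;
  body: string;
}

type NewComment = Omit<CommentRecord, "id">;

// In-memory stand-in for the comments collection.
class CommentStore {
  private comments: CommentRecord[] = [];
  private nextId = 1;

  // Read: all comments attached to one post.
  list(postId: number): CommentRecord[] {
    return this.comments.filter((c) => c.postId === postId);
  }

  // Write: assign an id and append to the collection.
  add(input: NewComment): CommentRecord {
    const comment: CommentRecord = { id: this.nextId++, ...input };
    this.comments.push(comment);
    return comment;
  }
}

const store = new CommentStore();

// Resolver map in the common GraphQL style (an assumption). The assistant
// has to wire these to the model and store above, typically across files.
const resolvers = {
  Query: {
    comments: (_parent: unknown, args: { postId: number }): CommentRecord[] =>
      store.list(args.postId),
  },
  Mutation: {
    addComment: (_parent: unknown, args: { input: NewComment }): CommentRecord =>
      store.add(args.input),
  },
};
```

Even in this reduced form, the change touches three places (model, store, resolvers), so an assistant that picks the wrong files cannot complete it.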

Test 2: Fixing a routing bug

A simple bug fix: change noClientSideRouting from true to false.

Cursor result: Found the variable, flipped the Boolean. Done in seconds.

Copilot result: Updated tons of unrelated files, got stuck in an infinite loading state on content.tsx, never actually fixed the bug.
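For reference, the whole fix amounts to one Boolean. The sketch below assumes the flag lives in a small config object; only the name `noClientSideRouting` comes from the test, while `RoutingConfig` and `shouldUseClientRouter` are hypothetical.

```typescript
// Hypothetical config shape; only the flag name is taken from the test.
interface RoutingConfig {
  noClientSideRouting: boolean;
}

// The fix: the flag was true, which disabled client-side routing.
const routingConfig: RoutingConfig = {
  noClientSideRouting: false,
};

// The flag is a negative, so the router is enabled when it is false.
function shouldUseClientRouter(config: RoutingConfig): boolean {
  return !config.noClientSideRouting;
}
```

A change this small is a useful baseline: any edits outside the one line holding the flag are noise.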

The pattern is clear: Copilot's multi-file editing is unreliable, slow, and often broken. Cursor's agent mode handles these tasks effortlessly.

Agent Mode vs Manual Tagging

Copilot requires manual file tagging while Cursor finds files automatically

One of Cursor's biggest advantages is agent mode—you describe what you want, and it automatically searches your codebase to find relevant files.

Cursor's agent mode:

- Automatic codebase search

- Fast file discovery

- No manual tagging needed

- Just describe what you want

Copilot's approach:

- Requires manual file tagging

- Slow codebase search (sometimes minutes)

- Often returns errors instead of results

- You need to know which files to include

In real-world testing, Copilot's codebase search was "incredibly slow" compared to Cursor. Sometimes it took minutes just to complete a search, and other times it simply errored out.

The practical impact: With Cursor, you type what you want and it figures out the rest. With Copilot, you keep adding file references by hand, which defeats much of the time-saving benefit.

Model Selection: Both Now Support Claude

Both tools now let you choose Claude 3.5 Sonnet for code generation

One thing Copilot has improved: you can now select different AI models, including Claude 3.5 Sonnet—the same model Cursor uses by default.

Available models:

- Claude 3.5 Sonnet (best for coding)

- GPT-4o (Copilot's default)

- o1-mini

- o1-preview

Even when using Claude 3.5 Sonnet in Copilot, the results were worse than Cursor. This suggests the issue isn't the model—it's how Copilot handles context, file discovery, and code generation.

Testing with GPT-4o and o1 variants didn't improve results. The underlying problems with Copilot's multi-file editing persist regardless of model choice.

Features That Actually Work Well

Both tools excel at terminal commands and smaller features

Not everything about Copilot is bad. Some features work quite well:

Terminal AI commands: Both tools let you use AI in the terminal. Hit Command+I (Copilot) or Command+K (Cursor) to generate shell commands from natural language.

This feature works well in both tools, though Cursor's Command+K shortcut can be annoying since it conflicts with common terminal shortcuts.

Commit message generation: Both tools can auto-generate commit messages from your changes. Copilot's are more concise; Cursor's are more verbose. Both work fine.

Inline autocomplete: Copilot was the original AI autocomplete and still does a good job. Cursor takes it further with multi-line suggestions, but both handle basic completions well.

Custom instructions: Both support project-specific AI rules:

- Copilot: .github/copilot-instructions.md

- Cursor: .cursorrules
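If you switch between the two tools, one option is to keep a single set of rules and write it to both locations. The sketch below is a small Node script under that assumption: the two file paths are the ones listed above, while the rule text and the `writeSharedRules` helper are made up for illustration. It assumes both tools accept plain natural-language instructions in these files.

```typescript
import * as fs from "node:fs";
import * as path from "node:path";

// File locations as listed above, relative to the project root.
const RULE_FILES = [".github/copilot-instructions.md", ".cursorrules"];

// Made-up example rules; replace with your project's conventions.
const SHARED_RULES =
  [
    "Use TypeScript strict mode.",
    "Prefer small, separately testable components.",
    "Do not edit files outside the scope of the task.",
  ].join("\n") + "\n";

// Write the same rules text to each assistant's instruction file under root
// and return the paths that were written.
function writeSharedRules(root: string, rules: string): string[] {
  return RULE_FILES.map((relative) => {
    const target = path.join(root, relative);
    // .github/ may not exist yet, so create parent directories as needed.
    fs.mkdirSync(path.dirname(target), { recursive: true });
    fs.writeFileSync(target, rules, "utf8");
    return target;
  });
}
```

Calling `writeSharedRules(process.cwd(), SHARED_RULES)` from the project root would overwrite both files, so review any existing rules before running it.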

Real-World Workflow Test

Testing both tools on a practical development task

To simulate a realistic workflow, I started with a fresh Remix app and asked both tools to replace a placeholder with a table of users from an API.

With Cursor:

- Found the right location immediately

- Generated a complete table component

- Added hover effects

- Separated code into its own component file

- Clean, well-organized output

With Copilot:

- Required manual file tagging (automatic search failed)

- Took much longer to generate

- First attempt didn't even put the code in the right place

- Output was messier, all inline instead of componentized

- Missing styling and images

The verdict: Cursor produces cleaner, more functional code with less manual intervention.

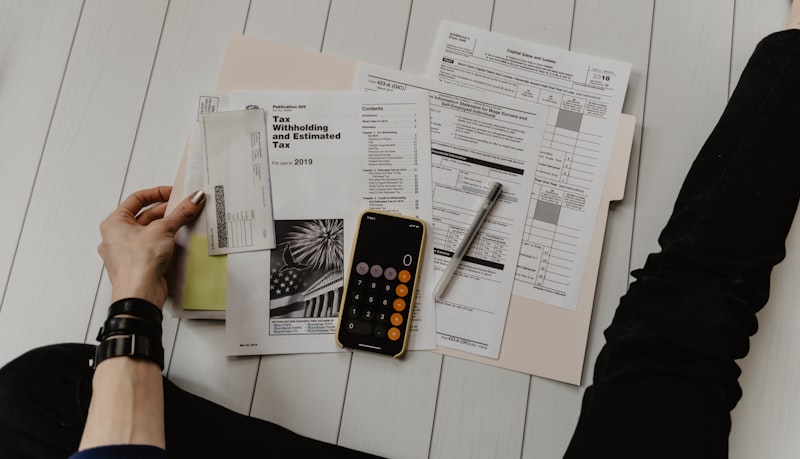

Pricing Comparison

GitHub Copilot offers a free tier while Cursor requires a subscription

Here's where Copilot has an advantage:

| Plan | GitHub Copilot | Cursor |
|---|---|---|
| Free | Yes (limited) | No |
| Individual | $10/month | $20/month |
| Business | $19/user/month | $40/user/month |

Copilot's free tier lets you try AI coding assistance without commitment. But the limits are tight—you'll likely need to upgrade after light usage.

Is the $10 difference worth it?

For professional developers: absolutely. Cursor's agent mode saves hours of coding time. If you value your time at all, the $20/month pays for itself quickly.
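A rough break-even calculation shows how little time Cursor has to save to cover the gap. The prices are the Individual plan figures from the table; the $50 hourly rate is purely an assumption for illustration, and `breakEvenMinutes` is a hypothetical helper.

```typescript
// Individual plan prices from the comparison table, in dollars per month.
const CURSOR_MONTHLY = 20;
const COPILOT_MONTHLY = 10;

// Minutes of saved work per month needed to cover the extra cost.
function breakEvenMinutes(extraCostPerMonth: number, hourlyRate: number): number {
  if (hourlyRate <= 0) {
    throw new RangeError("hourlyRate must be positive");
  }
  return (extraCostPerMonth * 60) / hourlyRate;
}

const extraCost = CURSOR_MONTHLY - COPILOT_MONTHLY; // 10

// Assumed rate of $50/hour: (10 * 60) / 50
const minutesNeeded = breakEvenMinutes(extraCost, 50); // 12
```

Under that assumed rate, saving about 12 minutes a month covers the price difference. At a lower rate the threshold rises proportionally, so plug in your own number.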

If you're on a tight budget, Windsurf offers similar quality to Cursor at a lower price point.

When to Use Each Tool

Match your workflow to the right tool

Use Cursor when:

- Multi-file refactoring is common

- You want automatic context discovery

- Agent mode workflows appeal to you

- You're willing to pay for productivity

- Reliability matters for your work

Use GitHub Copilot when:

- You're on a strict budget

- VS Code integration is essential

- Simple autocomplete is your main need

- You don't do much multi-file editing

- You're just exploring AI coding tools

Consider Windsurf when:

- You want Cursor-like quality at lower cost

- Multi-file editing is important

- You're open to a newer IDE

OpenClaw Integration for Development Workflows

Extend your AI coding workflow beyond the IDE

Neither Cursor nor Copilot operates 24/7 or integrates with messaging platforms. That's where OpenClaw complements your development workflow:

- Ask coding questions via Telegram or Discord

- Get code reviews while away from your desk

- Automate development tasks with cron jobs

- Access Claude API through a unified interface

Your IDE's AI handles real-time coding assistance. OpenClaw handles everything else—making them complementary tools rather than competitors.

Conclusion

The right choice depends on your priorities and budget

For serious development work, Cursor remains the clear winner. Its agent mode, automatic context discovery, and reliable multi-file editing make it worth the $20/month for anyone who codes regularly.

GitHub Copilot has improved significantly, but its multi-file editing features are still buggy, slow, and unreliable. The free tier makes it accessible, but you'll hit its limits quickly.

Recommendations:

- Professional developers → Cursor ($20/month)

- Budget-conscious developers → Windsurf (similar quality, lower price)

- Just exploring AI coding → GitHub Copilot free tier

- VS Code loyalists → Copilot (but consider the limitations)

The AI coding landscape evolves fast. But as of 2026, Cursor's lead in agent-based coding assistance remains substantial.

FAQ

Common questions about AI coding assistants

Is GitHub Copilot free now?

Yes, Copilot has a free tier for VS Code users. However, the limits are restrictive and you'll likely need to upgrade for regular use.

Can I use Claude with GitHub Copilot?

Yes, Copilot now supports model selection including Claude 3.5 Sonnet. However, the implementation still has issues with file discovery and context handling.

Does Cursor work with VS Code extensions?

Yes, Cursor is a VS Code fork and supports most VS Code extensions. Your existing setup should transfer over.

Which is better for beginners?

For learning, both work fine for basic autocomplete. If you want to learn agent-based coding, Cursor's better interface makes it easier to understand what's happening.

Can I use both together?

You could run Copilot as an extension in Cursor, but it's redundant. Pick one and master it rather than splitting attention between both.
